MTC: Staying Ahead of the Curve: Why ABA Techshow Is Not Optional for Today's Practicing Lawyer

ABA TECHSHOW is the perfect place for lawyers to learn the skills they need to meet the ABA's requirement to stay abreast of the benefits and risks associated with relevant technology used in the practice of law!

Let me be direct: technology is no longer a "nice-to-have" in legal practice. It is an ethical obligation. 🎯

The American Bar Association made that clear in 2012 when it amended the commentary to Model Rule 1.1, the foundational rule governing competence, adding technology language to what is now Comment 8. That comment states that, to maintain the requisite knowledge and skill, a lawyer should "keep abreast of changes in the law and its practice, including the benefits and risks associated with relevant technology." Not someday. Not when it's convenient. Now, and continuously. If you are a practicing attorney and you are not actively engaging in legal technology education, you are not just leaving efficiency on the table. You may be skating dangerously close to an ethical violation.

That is precisely why I keep coming back to ABA TECHSHOW — and precisely why I encourage every lawyer I speak with, regardless of their comfort level with technology, to attend.

🔑 ABA TECHSHOW Is Built for You — Yes, You

I want to address something head-on: the assumption that TECHSHOW is a conference for tech enthusiasts and IT professionals. It is not. The 2026 conference, running March 25–28 at McCormick Place in Chicago, features over 100 technology vendors and programming explicitly designed for lawyers at every skill level, including those who still break into a cold sweat opening a new software interface. The sessions span everything from AI fundamentals to cybersecurity to practice management to video communication. There is a deliberate on-ramp built into the conference structure because the organizers understand that the legal profession is diverse in its relationship with technology.

I have been privileged to serve as a speaker and faculty member at TECHSHOW, and this year is no exception. At TECHSHOW 2026, I am co-presenting two sessions that I believe speak directly to where the legal profession is right now.

The first, Podcasting for Lawyers: The Truth Behind the Mic, pulls back the curtain on how lawyers can leverage podcasting as a powerful tool for building authority, deepening client relationships, and positioning themselves as thought leaders in their practice areas. In a media landscape saturated with blogs and social media posts, a podcast gives you something rare: an intimate, sustained connection with your audience. As you know, I run my own podcast, The Tech-Savvy Lawyer.Page Podcast. In that session, my colleagues Ruby Powers (a previous podcast guest), Gyi Tsakalakis, and Stephanie Everett (hopefully future guests) and I share the real, actionable steps behind building compelling legal content. 🎙️

Learn how setting up a podcast studio can help with other legal events!

In my second session, Camera Ready Anywhere: Mastering Video Meetings with Clients, Courts, and Colleagues, my co-presenter Temi Siyanbade and I explore the practical, professional, and ethical dimensions of virtual communication. Virtual meetings are now a permanent fixture of legal practice, whether you are conducting client consultations on Zoom, appearing remotely before a tribunal, or negotiating with opposing counsel on Microsoft Teams, so looking and sounding competent on camera is no longer optional. This session covers audio and video setup, lighting, platform best practices, and how to project professionalism in a digital environment. The irony is that many lawyers who are meticulous about their appearance in a courtroom give almost no thought to how they present themselves on a video call. That gap matters. It matters to clients. It matters to judges. And yes, it can matter to your reputation.

⚖️ The ABA Model Rules Are Not Suggestions

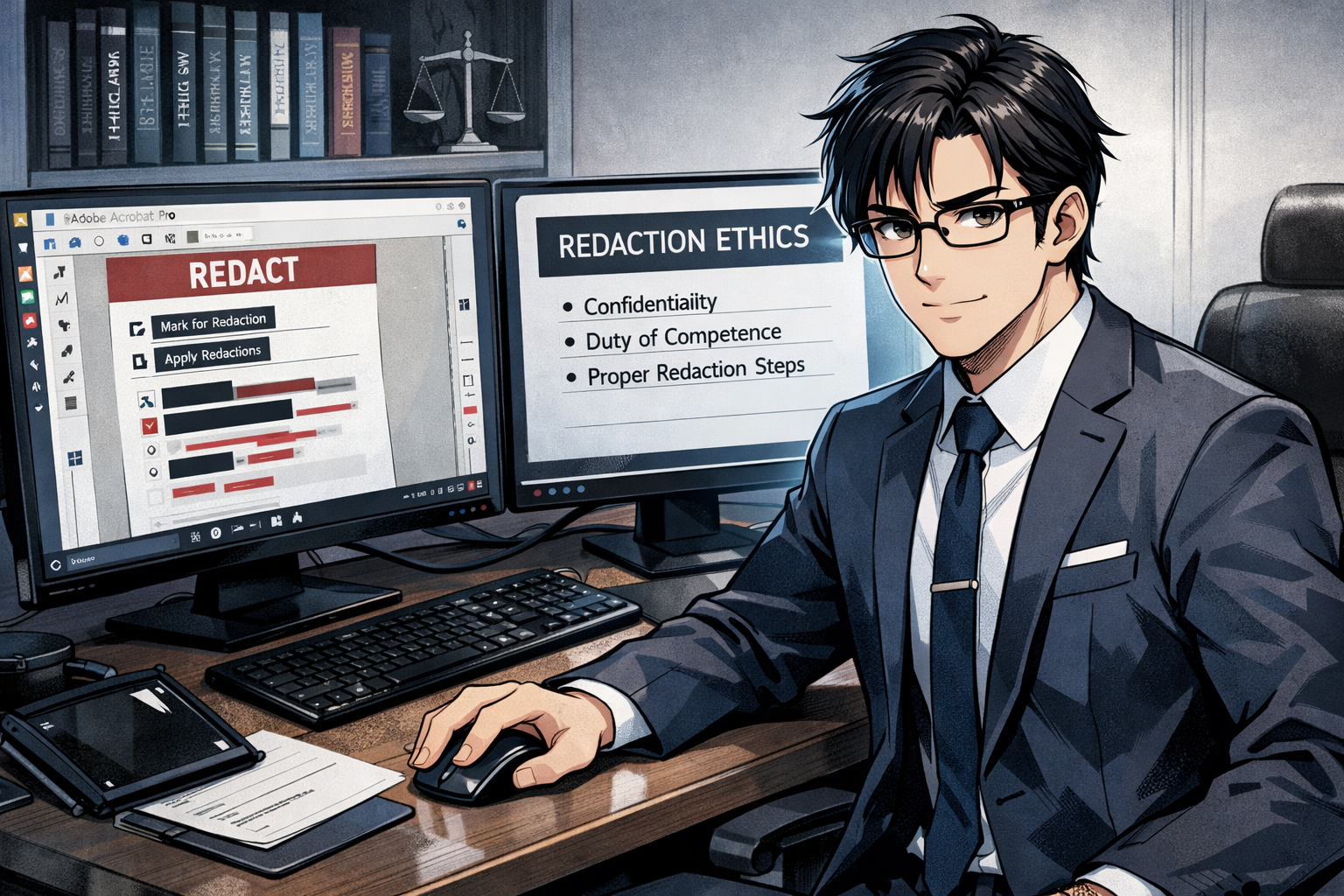

Let us return to the ethics piece, because I think it deserves more than a passing mention. ABA Model Rule 1.1 sets the standard for competent representation. Most lawyers understand this in terms of legal knowledge — knowing the law, understanding procedure, being prepared. Fewer appreciate that the ABA's 2012 amendment has extended that standard to technology.

As of today, 40-plus states have adopted some version of the technology competence obligation articulated in Comment 8. The District of Columbia most recently joined that group in 2025. This is not a fringe interpretation. It is a growing national consensus about what it means to be a competent lawyer in the modern era.

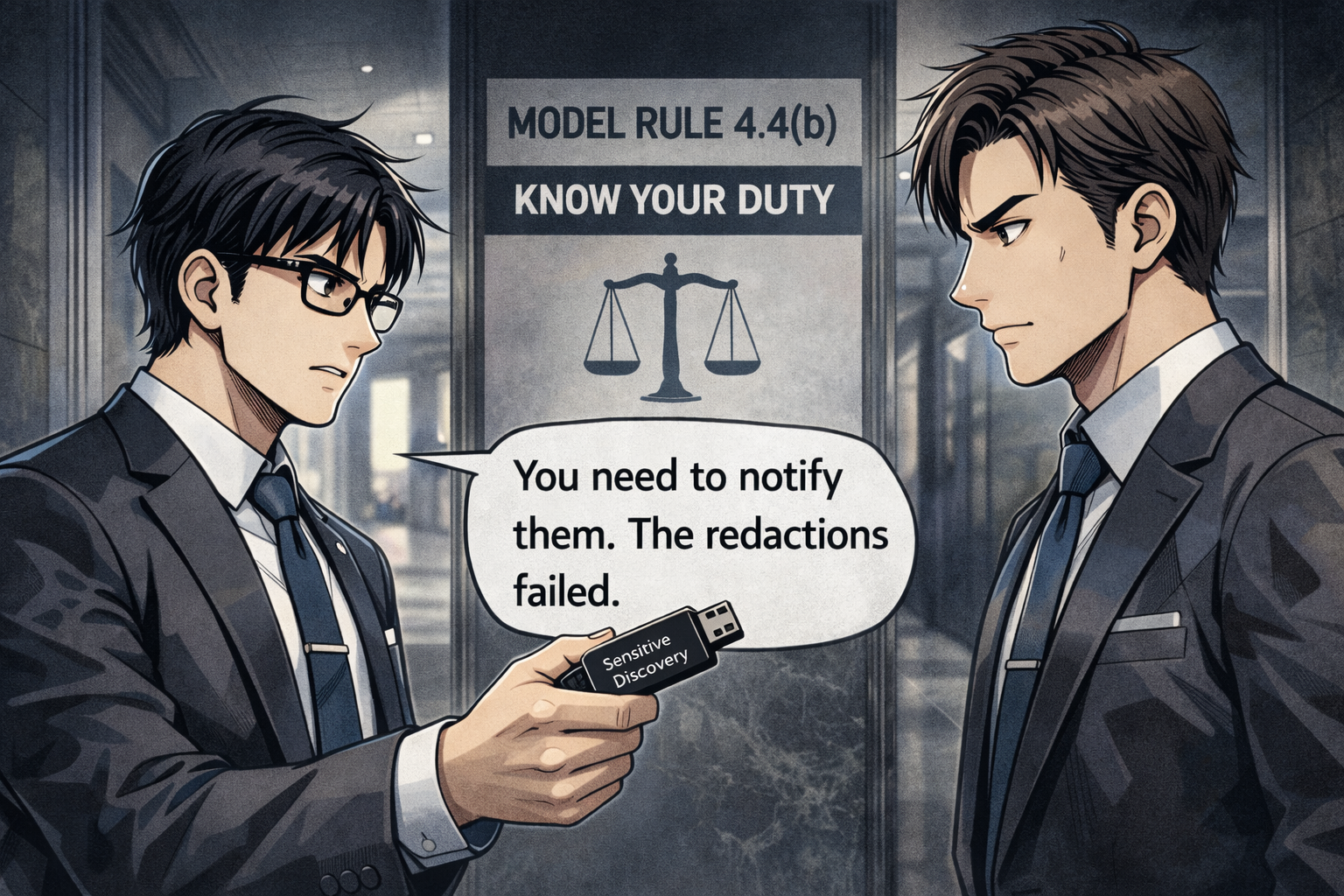

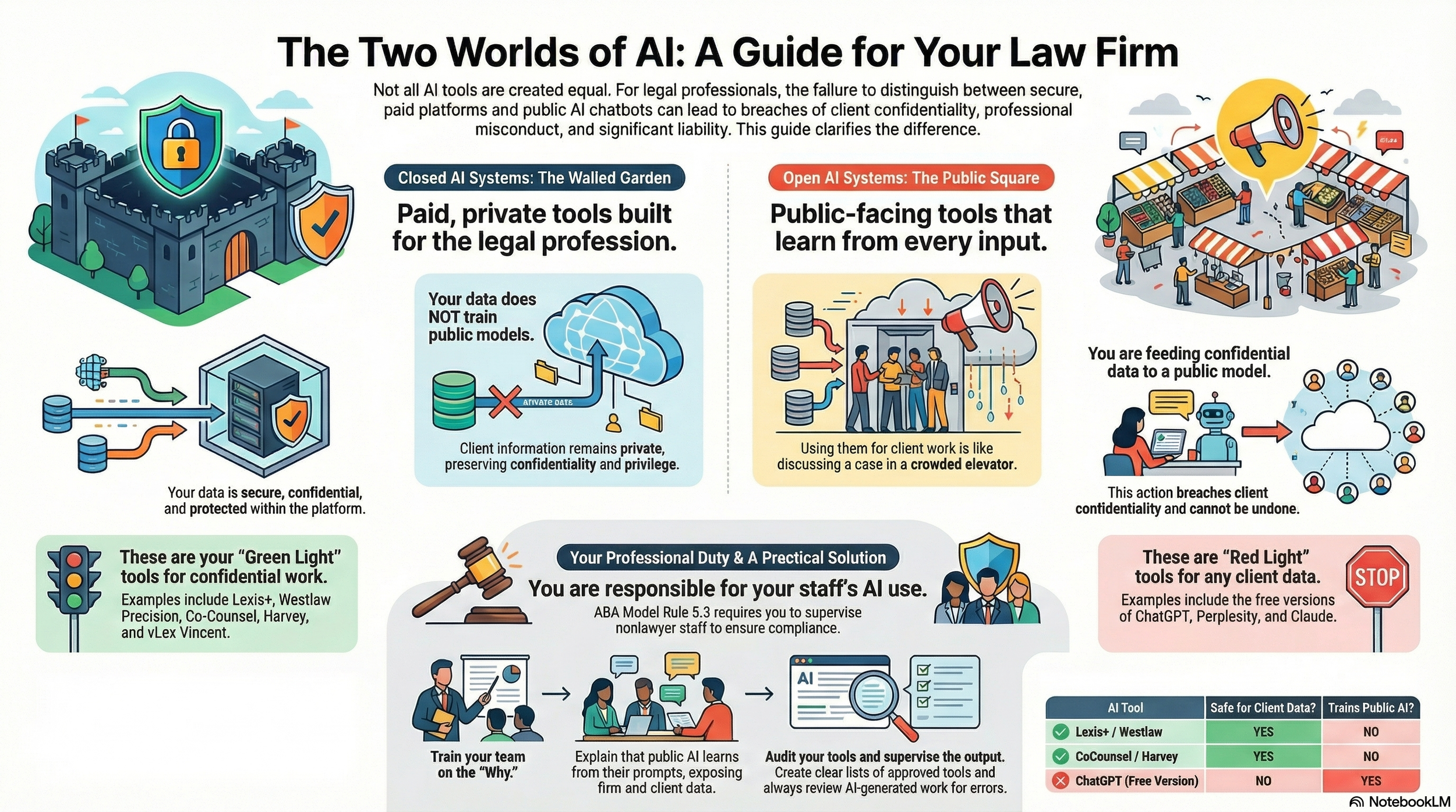

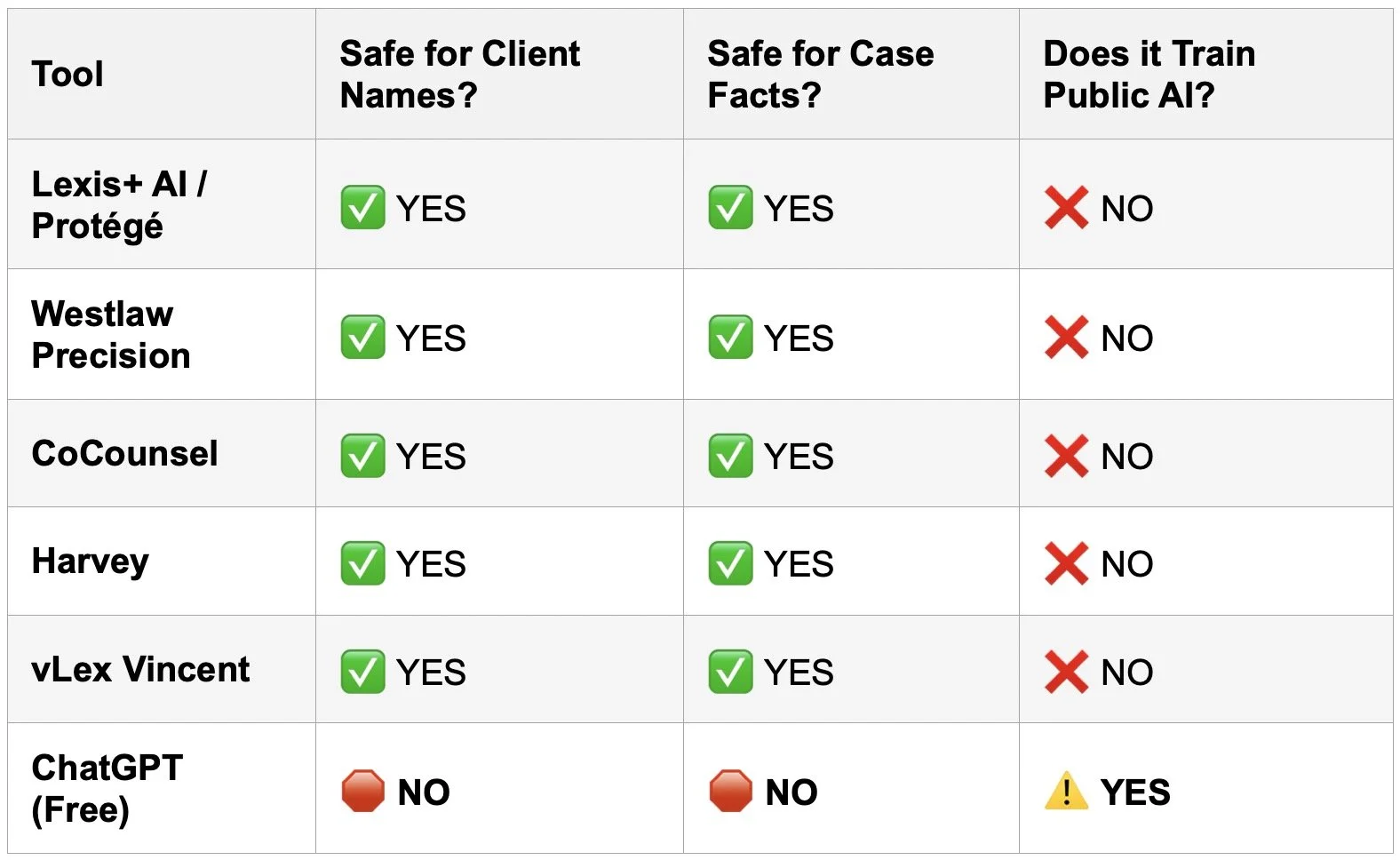

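Rule 1.6, governing confidentiality, also carries technology implications: paragraph (c) requires a lawyer to make reasonable efforts to prevent the inadvertent or unauthorized disclosure of, or unauthorized access to, client information. A lawyer who fails to understand how their email system works, who stores client data on unsecured devices, or who falls victim to a phishing attack that exposes client files has potentially breached their duty of confidentiality. Rule 5.3 requires lawyers with supervisory authority to make reasonable efforts to ensure that the conduct of nonlawyer assistants is compatible with the lawyer's professional obligations, and that includes how those assistants use firm technology. The tentacles of technology competence reach throughout the Model Rules.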

Conferences like TECHSHOW exist, in part, to help you satisfy these obligations in a practical, hands-on way. The ABA Law Practice Division has consistently described TECHSHOW as an opportunity to understand the "benefits and risks" of technology, the exact language of Comment 8. This is not accidental. It is intentional alignment between the programming and your professional duties.

🚀 The Future Is Already Here — Are You Ready?

The 2026 theme — Innovation That Protects the Rule of Law — reflects something I have believed for years: technology, when adopted thoughtfully, does not undermine the legal profession. It strengthens it. AI tools are transforming how lawyers research, draft, and communicate. Wearable technology and augmented reality are beginning to reshape how we work and collaborate. Deposition technology is being revolutionized by AI-powered transcript tools and remote video platforms. None of this is science fiction. It is happening right now, in law firms across the country.

The question is not whether you will engage with these tools. The question is whether you will engage with them proactively — understanding their benefits and their risks — or reactively, scrambling to catch up after a client complaint or a disciplinary inquiry.

I am not here to alarm you. I am here to invite you. 🤝

Your podcast studio setup impacts how you are perceived in the virtual legal landscape!

Whether you are a solo practitioner trying to figure out which AI tool is worth your subscription fee, or a partner at a mid-size firm wondering how to lead your team through a technology transition, TECHSHOW offers you a safe, supportive, and genuinely energizing environment to learn. Most of the sessions are CLE-eligible. The vendors are accessible and eager to demonstrate, not sell. The community is collaborative.

More than four decades of working with technology, nearly 30 of those years in the legal arena, have taught me one thing above all else: the lawyers who thrive are not necessarily the most tech-savvy. They are the most tech-willing, the ones who stay curious, stay engaged, and never stop learning. 💡

TECHSHOW is where that learning happens. I will see you there.

REGISTER HERE!

MTC