TSL.P Labs 🧪 Initiative: Why 96% AI Accuracy Still Fails Lawyers: Ethics, Hallucinations, and the Future of the Billable Hour ⚖️🤖

📌 Too Busy to Read This Week’s Editorial?

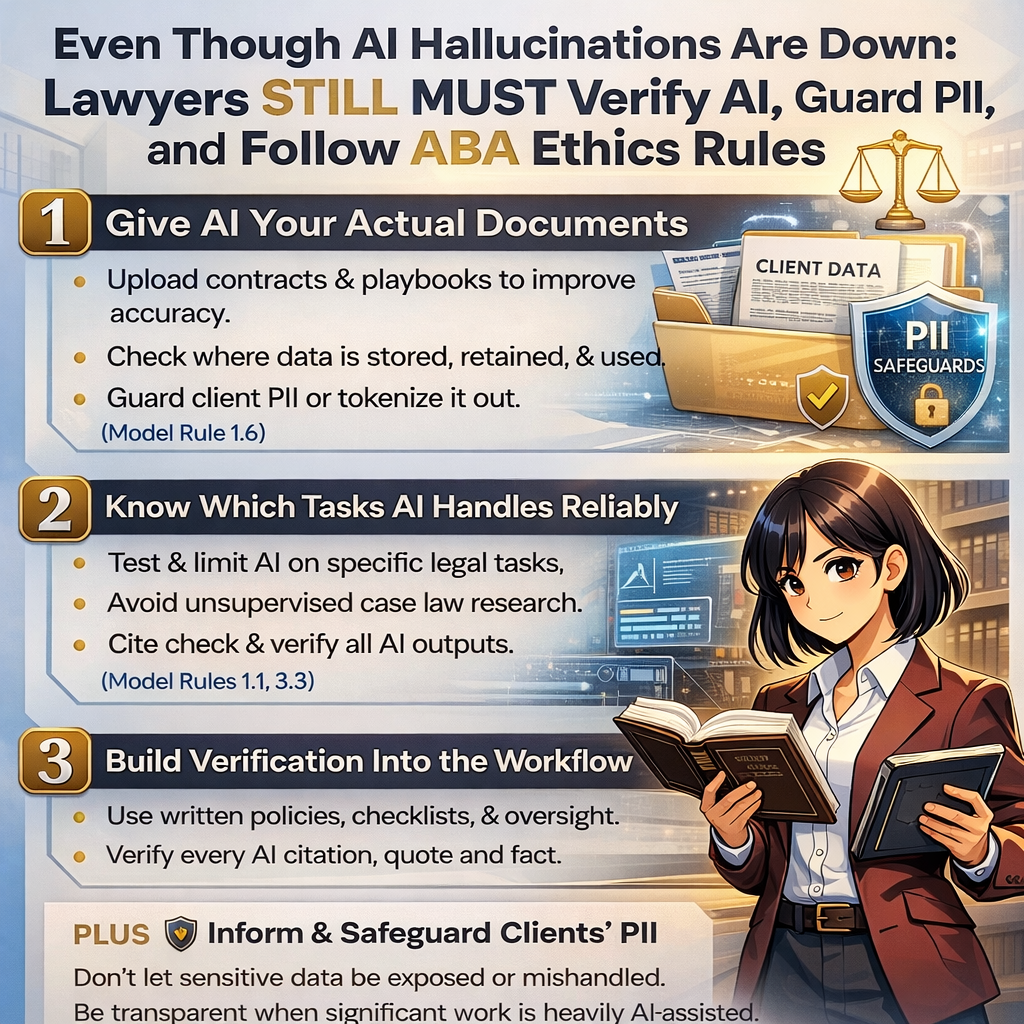

Welcome to the TSL Lab’s Initiative. 🤖 This week’s episode builds on my March 3rd, 2026, editorial, “Even Though AI Hallucinations Are Down: Lawyers STILL MUST Verify AI, Guard PII, and Follow ABA Ethics Rules ⚖️🤖.” We explore why a falling hallucination rate is a misleading comfort blanket for lawyers, and how the ABA Model Rules on confidentiality, competence, diligence, candor, supervision, and client communication must govern every AI prompt you run. Our Google LLM Notebook hosts translate the theory into practical workflows you can implement today, from document grounding and tokenization to vendor due diligence and line‑by‑line verification, so you can leverage AI confidently without sacrificing ethics, privilege, or your professional license.

You will hear how document grounding changes what LLMs actually do, why uploading active case files to cloud AI tools can quietly trigger Rule 1.6 problems, and how cross‑border data flows, vendor training rights, and retention policies can erode privilege if you do not negotiate them carefully. 🔐 We also unpack practical safeguards like tokenization, internal sandbox testing, and bright‑line “danger zones” where AI must never operate unsupervised—especially on open‑ended research, choice of law, and any task that turns statistical text into real‑world legal risk.

Finally, we confront the economic paradox: when AI can compress 100 hours of document review into seconds, but partners must still verify every line to protect their licenses, what exactly are clients paying for—and how does the billable hour survive? 💼

In our conversation, we cover the following:

00:00 – Why “96% fewer hallucinations” is still not good enough in law ⚖️

01:00 – How the remaining 4% error rate can trigger malpractice, sanctions, and ethics violations

02:00 – From IT issue to ethics issue: ABA Model Rules as the real constraint on AI adoption

03:00 – Document grounding 101: turning a free‑floating LLM into a reading‑comprehension engine

04:00 – The hidden danger of “just upload the file”: how Rule 1.6 confidentiality is instantly implicated

05:00 – Cloud AI architecture, cross‑border data transfers, GDPR, and privilege risk 🌐

06:00 – Model training nightmares: when your client’s trade secrets leak back out through someone else’s prompt

07:00 – Negotiating no‑training clauses and ring‑fencing vendor data use (before you upload anything)

08:00 – Tokenization explained: turning John Doe into “Plaintiff 01” without losing legal meaning 🔐

09:00 – What AI does well today: grounded summarization, clause extraction, and playbook‑based redlines

10:00 – The “danger zone” of tasks: open‑ended research, choice of law, and abstract legal reasoning

11:00 – Phantom case law: how LLMs manufacture perfect‑looking but fake citations (and Rule 3.3 candor)

12:00 – Sandboxing AI tools internally and measuring real‑world failure rates against known outcomes 🧪

13:00 – Building bright‑line firm policies around forbidden AI use cases

14:00 – Verification as a workflow, not a suggestion: what Model Rules 5.1 and 5.3 demand from supervisors

15:00 – The efficiency paradox: when partner‑level verification erases associate‑level time savings ⏱️

16:00 – Making AI verification as routine as a conflict check in your practice

17:00 – Falling hallucination rates, rising risk: why better AI can still make lawyers more vulnerable

18:00 – Client communication under Rule 1.4: when and why clients may be entitled to know you used AI

19:00 – “You can delegate the task, not the liability”: Rule 1.2 and ultimate responsibility for AI‑assisted work

20:00 – Treating every AI prompt and ToS as a potential ethics document

21:00 – The existential question: if AI drafts in seconds, what exactly are clients paying lawyers for? 📝

👉 Tune in now to learn how to stay tech‑forward without becoming the next ethics cautionary tale, and start designing AI policies that actually protect your clients, your firm, and your bar license.