TSL.P Podcast Special! Podcasting for Lawyers: The Truth Behind the Mic – ABA TECHSHOW 2026 (Special Audio‑Only Episode) 🎙️⚖️

This special episode features the audio‑only release of an ABA TECHSHOW 2026 panel I was excited to be part of: “Podcasting for Lawyers: The Truth Behind the Mic,” with moderator Ruby Powers and fellow panelists Gyi Tsakalakis and Stephanie Everett. 🎧 Instead of our usual one‑on‑one format, you will hear a live, conference‑style conversation about how lawyers can use podcasting, video, and modern legal technology to build authority, strengthen client and referral relationships, and stay within legal‑ethics and professionalism rules.

Join Ruby, Gyi, Stephanie, and me as we discuss the following three questions and more!

How can lawyers design and sustain a podcast that supports their practice goals and speaks to a clearly defined audience?

What practical tech stacks—microphones, recording platforms, hosting services, and workflow tools—are realistic for busy attorneys and legal professionals?

How do podcasting, video, and short‑form content contribute to search engine optimization (SEO), generative engine optimization (GEO), and long‑term business development for law firms?

In our conversation, we cover the following:

00:00 – Welcome to ABA TECHSHOW 2026 and introduction of the panel: Ruby Powers (moderator), Gyi Tsakalakis, Stephanie Everett, and Michael D.J. Eisenberg. 🎙️

02:00 – Each panelist explains their podcast, ideal listener, and why they chose podcasting as a medium.

06:00 – Publishing cadence: weekly, bi‑weekly, and how consistency drives listener trust and download growth.

10:00 – Adding video and YouTube to audio‑only shows and how video clips improve discovery on social media.

14:00 – DIY production vs. using producers, internal teams, or podcast networks, including time and cost trade‑offs.

18:00 – Core tech stacks in practice: microphones, Zoom, Riverside, StreamYard, Descript, Libsyn, Calendly, Buffer, and other essentials. 💻

24:00 – Guest selection, outreach, and sound checks; when to decline an appearance or reschedule due to poor audio or bad fit.

30:00 – Using podcast hosting analytics and social‑platform insights to understand who is listening and what resonates.

35:00 – Podcasting as networking and “virtual coffee”: building relationships with lawyers, experts, and vendors. ☕

40:00 – SEO and GEO benefits: how episodes create long‑tail visibility in search, and why attribution still matters.

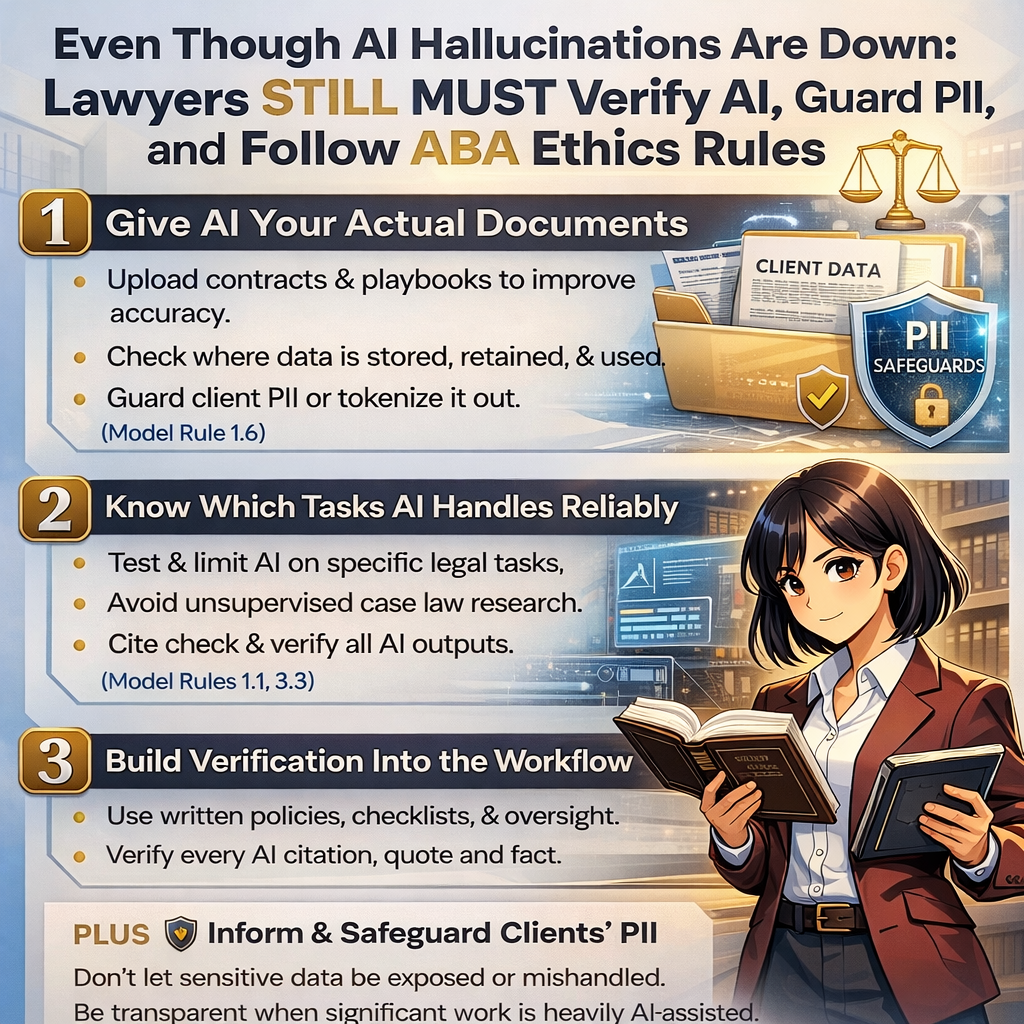

45:00 – Ethics and professionalism: confidentiality, bar‑advertising rules, disclaimers, and avoiding client‑identifying facts. ⚖️

52:00 – Final advice for lawyers on the fence about starting a podcast and how to improve with each episode instead of waiting for perfection.

RESOURCES

Connect with the panel

ABA TECHSHOW 2026 session: “Podcasting for Lawyers: The Truth Behind the Mic” – https://www.techshow.com/sessions/podcasting-for-lawyers-the-truth-behind-the-mic/

Gyi Tsakalakis – Lunch Hour Legal Marketing – https://lunchhourlegalmarketing.com

Ruby Powers – Power Strategy Group – https://powersstrategygroup.com 😊

Stephanie Everett – Lawyerist / The Lawyerist Podcast – https://lawyerist.com

Mentioned in the episode (non‑hardware / non‑software)

ABA TECHSHOW – https://www.techshow.com

Clio Cloud Conference – https://www.cliocloudconference.com

The Lawyers’ Guide to Podcasting by Michael D.J. Eisenberg – https://www.amazon.com/dp/B0GGX32DZH 📘

Podcast Movement – https://podcastmovement.com/

Podfest Expo – https://podfestexpo.com/

Power Up Your Practice by Ruby Powers – https://powerupyourpractice.com/

Prenups.com – https://www.prenups.com

Hardware mentioned in the conversation

PlexiCam camera mount – https://www.plexicam.com

Shure MV7 microphone – https://www.shure.com/en-US/products/microphones/mv7 🎙️

Software & Cloud Services mentioned in the conversation

Buffer – https://buffer.com

Calendly – https://calendly.com

Descript – https://www.descript.com

Facebook – https://www.facebook.com

GarageBand – https://www.apple.com/mac/garageband

Libsyn (podcast hosting) – https://libsyn.com

LinkedIn – https://www.linkedin.com

Riverside – https://riverside.fm

StreamYard – https://streamyard.com

YouTube – https://www.youtube.com

Zoom – https://zoom.us