MTC: AI Won’t Replace Solo and Small-Firm Lawyers — It Will Supercharge Them ⚖️🤖

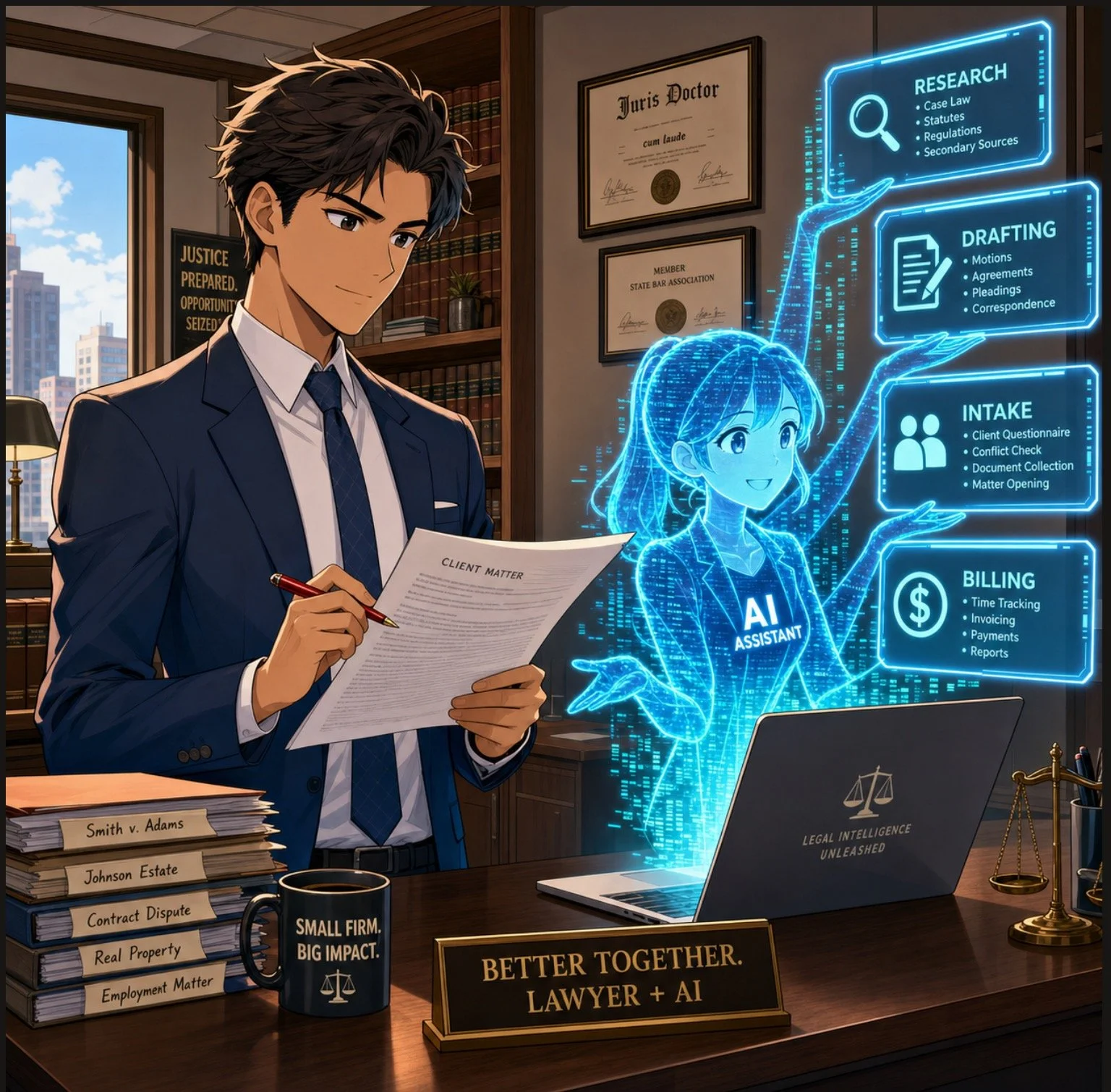

Solo lawyers can use artificial intelligence as a virtual associate to handle legal research, drafting, intake, and billing in a modern small law firm ⚖️🤖

If you run a solo or small-to-medium firm, you’ve probably heard the predictions: AI will automate legal tasks in “12 to 18 months” or replace traditional lawyers entirely by 2035. Those headlines make great clickbait, but they miss what is actually happening on the ground in smaller practices. AI is not wiping out solo and small-firm lawyers; it is changing the mix of tasks we do — and creating more opportunities for us if we adopt it intentionally and ethically.

In a recent Washington Post opinion piece, Damien Charlotin argues that AI won’t replace lawyers; it will create more of them. His logic matters especially for solos and small firms. He describes legal jobs as “bundles of tasks,” many of which are tightly linked and not easily peeled apart for automation. If you’ve ever juggled intake, research, drafting, negotiation, and billing in a single day, you know exactly what that tight bundle feels like. AI is about to start pulling on pieces of that bundle, and your job is to decide how to rebundle your work in a way that serves clients, protects ethics, and keeps your business healthy. ⚖️🤖

Why Solo and Small Firms Should Ignore the Doom Headlines 😅

Charlotin points out that lawyers have never been more numerous in the United States, with law school applications rising and record-high employment in bar-required jobs. That’s happening at the same time as AI hype, which should tell you something: the profession is not collapsing.

For solos and small firms, the bigger risk is not “AI replaces me” but “AI-literate competitors serve my clients better than I do.” Larger firms may have innovation teams and internal IT, but you have agility and direct control over your workflows. If you can use AI to shave hours off routine tasks, and reinvest that time in client counseling, business development, or flat-fee offerings, you can turn AI from a threat into a differentiator. As I often say on The Tech-Savvy Lawyer.Page podcast, AI is the junior associate you don’t have to hire but still have to supervise.

Your Practice as a “Tight Bundle” of Tasks 🧩

Charlotin’s “bundles of tasks” concept is tailor-made for solo and small-firm reality. In big firms, tasks can be split across teams; in smaller shops, you wear most of the hats. Research, drafting, strategy, client communication, and billing are often intertwined in a single matter.

For experienced lawyers, Charlotin notes, “doing legal research and evaluating an argument are … often the same mental activity”: we check the argument by writing it. If you offload the writing to AI, verification becomes a separate, deliberate step that takes time; skip it, and you risk sanctions for filings that contain hallucinated citations. That is why I push solo and small-firm lawyers to treat AI as an assistant that drafts and summarizes, while you retain control over the analysis and the final product.

Lessons from E-Discovery for Small Practices 📂➡️📈

Charlotin likens the current AI hype to the e-discovery wave more than a decade ago. Back then, headlines like those from The New York Times predicted “Armies of Expensive Lawyers, Replaced by Cheaper Software.” What actually happened? The volume of discoverable material exploded; the tools became part of practice; and lawyers moved into new roles managing, interpreting, and litigating around that information.

Economists call this the Jevons paradox: when a process becomes cheaper, people use more of it. The same dynamic is already playing out in tools marketed to solo and small firms. AI-assisted drafting and research platforms now make it viable for smaller shops to handle matters that previously required big-firm staffing, and to offer more predictable pricing without cutting quality. Cheaper legal work often means more legal work, especially for clients who previously couldn’t afford you.

ABA Model Rule 1.1: Competence for Lean Teams 📚

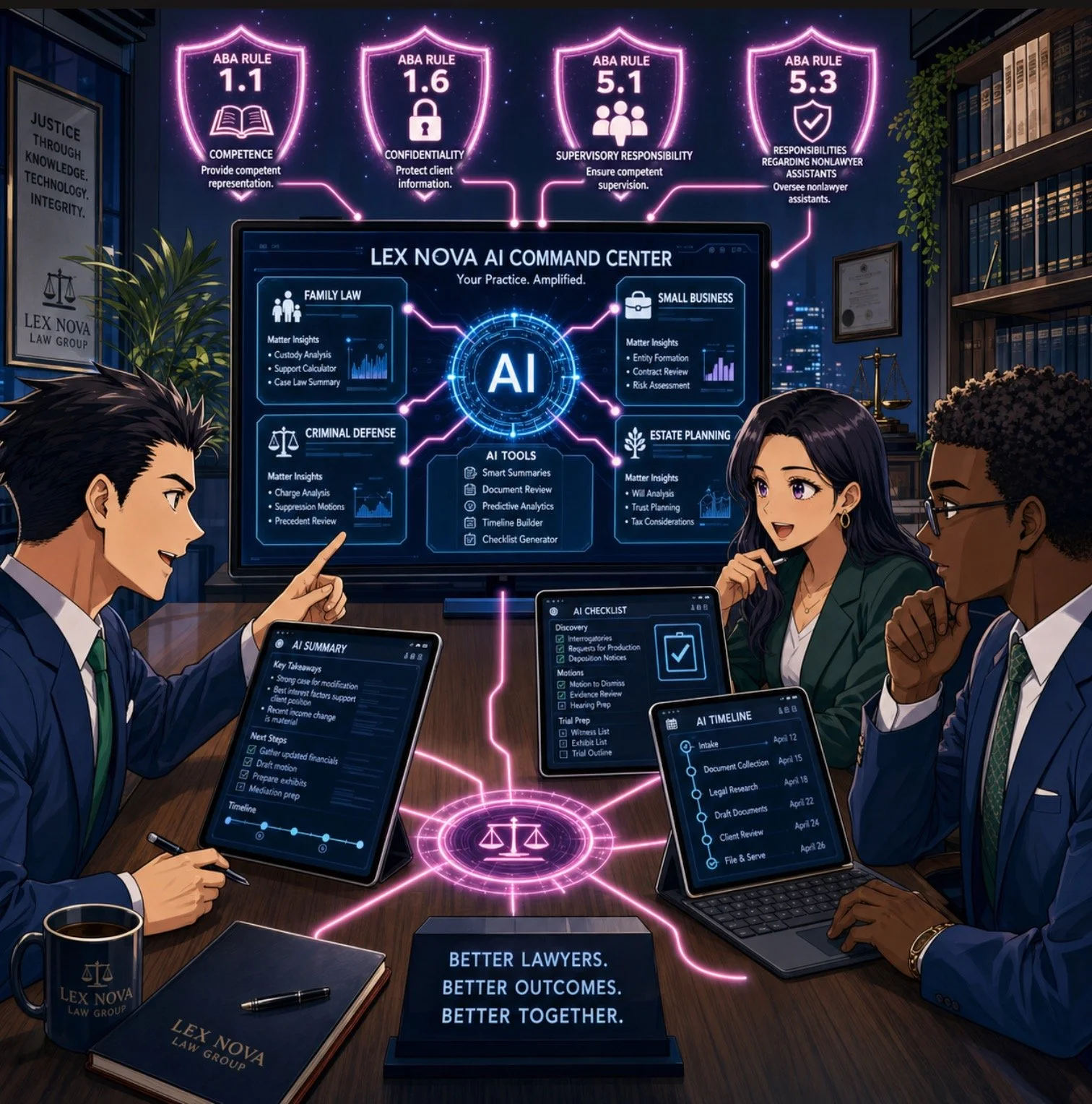

Small law firm team using legal AI tools to improve collaboration, client service, and ABA-compliant workflows across a lean practice 👩‍⚖️👨‍⚖️💻

For solos and small- to medium-sized firms, ABA Model Rule 1.1 on competence is both a challenge and an opportunity. Comment 8 to the rule says that maintaining competence includes keeping abreast of “the benefits and risks associated with relevant technology,” and today that includes AI. But unlike big firms, you can’t delegate that understanding to an IT department or an internal AI committee; you are the committee.

Practically, that means you need at least a working grasp of what your chosen AI tools do, how they handle data, and where they fit in your workflows. You don’t need to run every experiment at once. Start with one or two high-impact areas — say, summarizing long PDFs, generating first drafts of routine emails, or creating checklists from statutes or rules — and build from there. Competence for solo and small-firm lawyers is not about chasing every new feature; it’s about picking the right tools for your practice and using them deliberately.

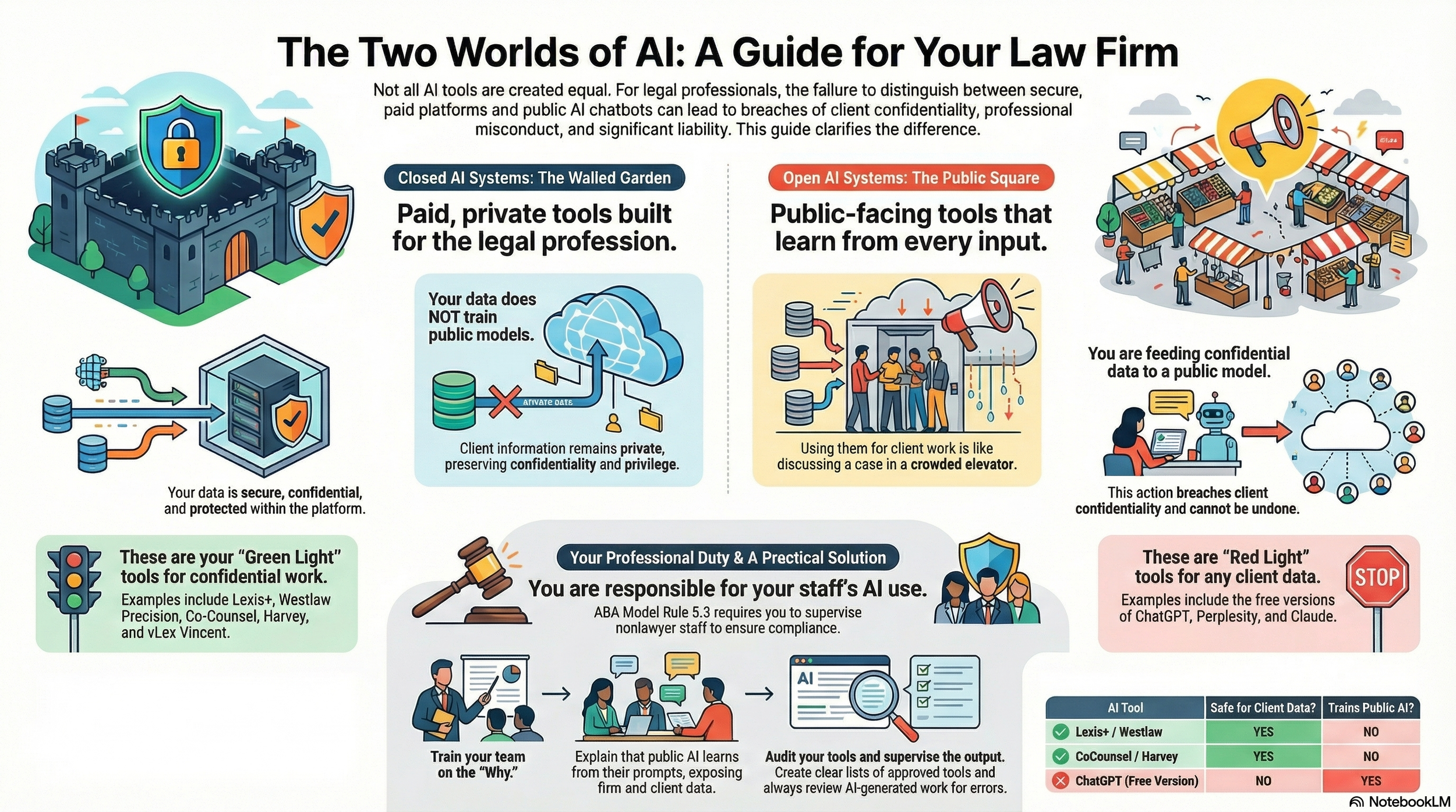

Rules 5.1 and 5.3: Supervision When “You Are the Management” 👥🤖

You might think Rules 5.1 and 5.3 (supervision of lawyers and nonlawyers) are big-firm problems. They’re not. If you have even one staff member, contract attorney, or virtual assistant, you are responsible for how they use AI. And even if you’re truly solo, you’re still responsible for supervising the AI tools you deploy as if they were a nonlawyer assistant.

For small practices, the most practical move is a simple written AI policy, even if it’s a one-page document:

Which tasks can use AI (e.g., research assistance, first-draft documents);

Which tasks require heightened review (e.g., anything filed with a court);

Which tasks are off-limits (e.g., unsupervised client advice, sensitive fact patterns pasted into consumer chatbots).

As discussed both in Charlotin’s piece and in bar guidance for smaller firms, formal policies help you avoid ad hoc, inconsistent AI use that could jeopardize client confidentiality or court obligations.

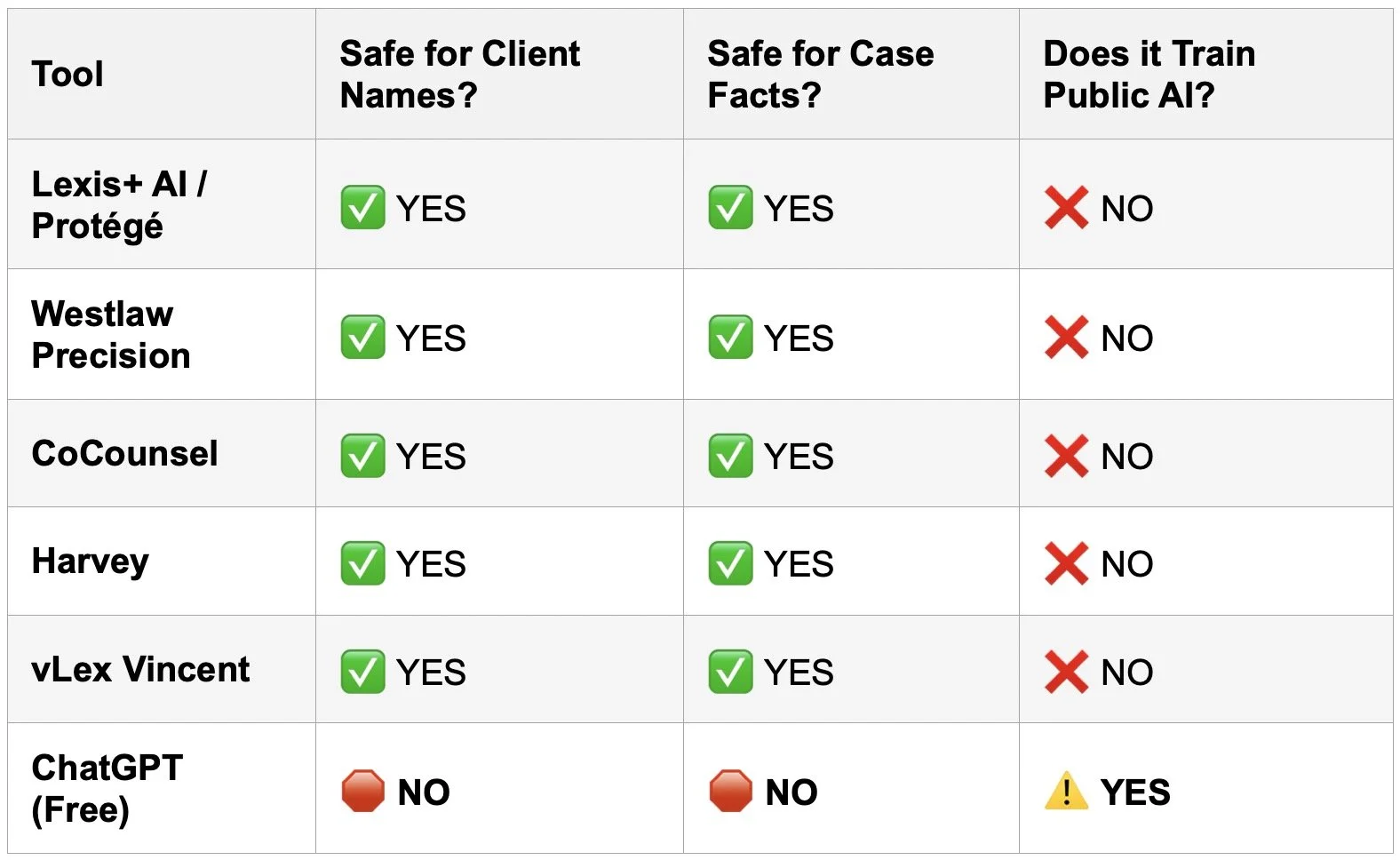

Rule 1.6 Confidentiality: Cloud Tools on a Budget 🔐

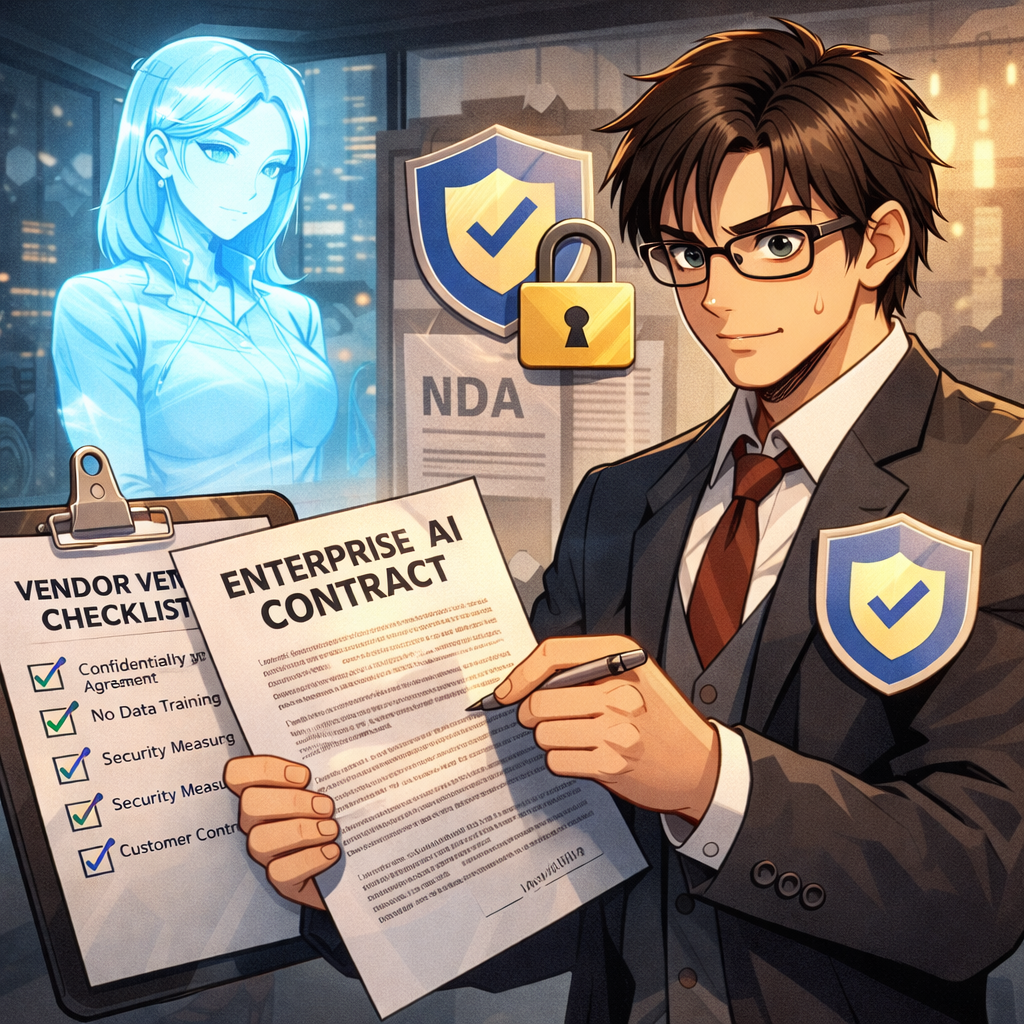

Model Rule 1.6 on confidentiality doesn’t change just because you’re a small shop, but your margin for error is thinner. Many solos and small firms rely on cloud-based tools because they can’t host their own infrastructure. That’s fine, as long as you vet those tools carefully.

Before pasting client facts into an AI tool, you must know whether it stores or reuses data, whether it trains on your inputs, and whether there’s an option for a “no training” or “enterprise” mode. When in doubt, prefer AI features built into reputable legal platforms (research tools, practice management systems, document automation suites) with clear confidentiality commitments, rather than generic consumer apps. On The Tech-Savvy Lawyer.Page, I hammer this point because solos cannot absorb the cost of a major data mishap the way some larger organizations can.

Legislative Inflation and Niche Opportunities for Smaller Firms 📜📈

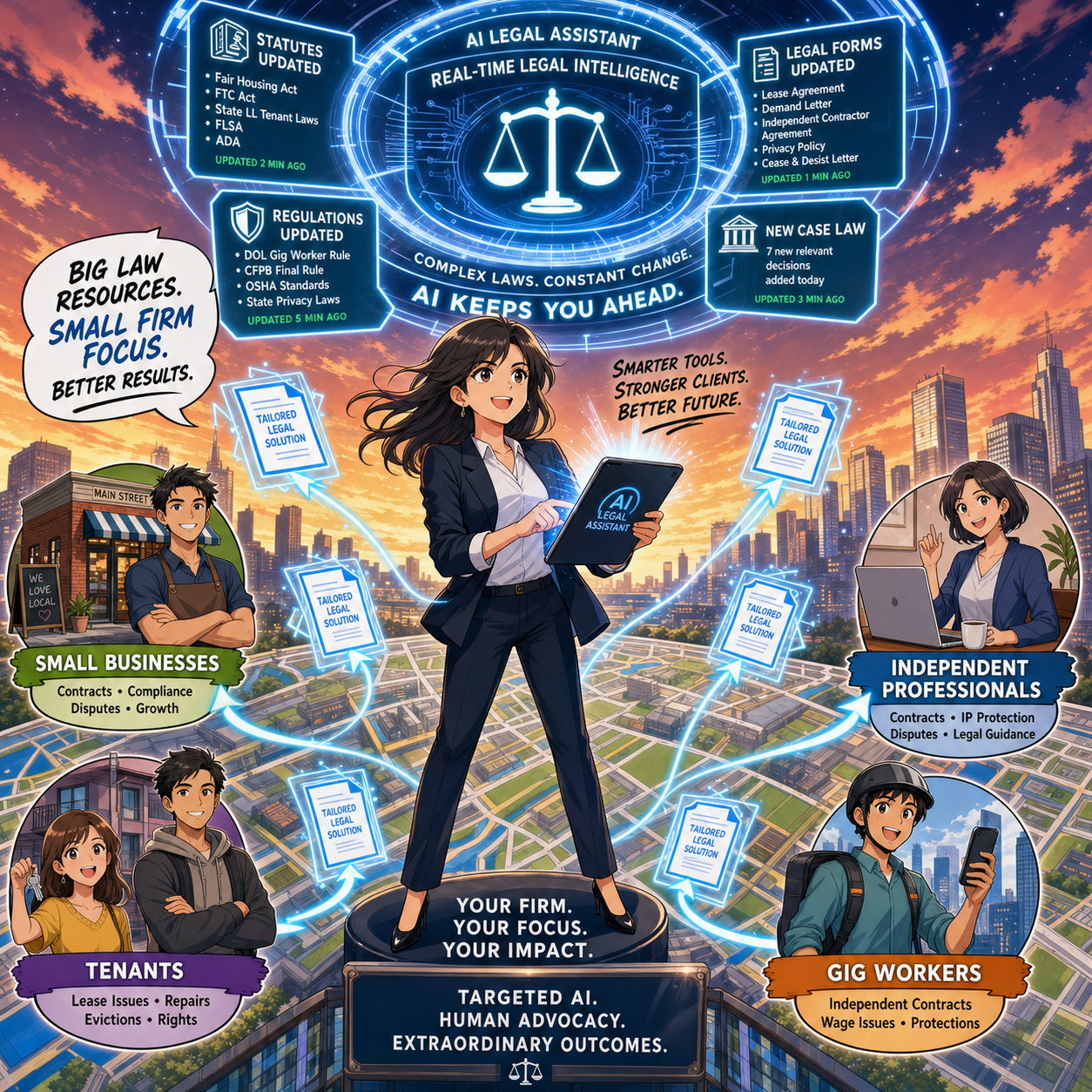

Charlotin notes that every jurisdiction is “afflicted by legislative inflation” — more rules, more norms, more regulations. That means more interpretation, more disputes, more filings, and more need for lawyers. For solos and small-to-medium firms, this is an opportunity to carve out narrow niches and use AI to keep up with complex, evolving regimes that might otherwise be out of reach.

An AI-enabled solo can monitor regulatory changes, generate quick client alerts, and update templates far faster than before. Combined with targeted content marketing and SEO, this makes it possible to dominate specific micro-niches without a big marketing budget — something I frequently discuss on The Tech-Savvy Lawyer.Page when we talk about modern business development.

Entry-Level Work and the Solo/Small Pyramid 🧑‍🎓➡️⚖️

A small-firm lawyer can use AI-powered legal technology to serve niche clients, track changing regulations, and deliver efficient legal services across a local market 🎯⚖️

Charlotin flags a serious concern: AI may change entry-level work. For big firms, that means rethinking associate leverage. In smaller firms, it means you may hire differently — or delay that first hire because AI picks up some of the routine drafting and research.

But Charlotin also notes that young lawyers are hired for reasons beyond their marginal drafting value — future partnership, signals to clients, bench strength for unpredictable surges. The same is true for small and mid-size firms. AI can handle some grunt work, but it can’t attend a community event, build a local reputation, or bring in referrals. If you use AI to free juniors from the most repetitive tasks, you can push them earlier into client-facing and business-building roles, which is exactly where smaller firms thrive.

Reorganization, Not Replacement — Especially for You 🔄

Charlotin closes by emphasizing that while the profession will look different in 2035, the lawyer is here to stay, and there will likely be more lawyers, not fewer. They will use AI — “they would be fools not to” — and they will charge for that value.

For solo and small-to-medium firms, the reorganization is already underway:

Routine drafting and research shift toward AI-assisted workflows.

Verification, judgment, and client counseling become even more central.

Niche expertise, responsiveness, and pricing flexibility become your competitive edge.

If you treat AI as a core part of your toolkit, governed by the ABA Model Rules and aligned with your business goals, you position your firm not just to survive the AI wave but to ride it. ⚖️🤖

As I and others have said many times: lawyers must bring AI into their practice of law or be left behind by those who do!