TSL.P EP# 132 (Special Episode): AI, Deepfakes, and Metadata: Guest-Hosting Capital University Law School’s First Law Library Podcast Club with Professor Jennifer Wondracek 🎙️⚖️

🎙️ This week, we have something special for you. Michael D.J. Eisenberg, your blogger and podcaster at The Tech-Savvy Lawyer.Page, was invited back to his alma mater, Capital University Law School, to guest host its very first Podcast Club: a live, recorded podcast conversation featuring real law students, real questions, and real talk. Co-moderated with Professor Wondracek, this is an unscripted, in-the-moment discussion, not a produced studio episode or a rehearsed explainer, just an honest, practitioner-led conversation about the issues shaping the legal profession today. 🎓 We think that's exactly what makes it worth your time. 🛡️⚖️

In our conversation, we cover the following:

[00:00] 🎬 Welcome & Introductions — Professor Raik introduces Michael D.J. Eisenberg; Michael shares his background representing veterans before the VA and his work at The Tech-Savvy Lawyer.Page, including his Amazon #1 bestselling book The Lawyer's Guide to Podcasting

[01:00] 🕵️ What Is a Deepfake? — Defining deepfakes and the real-world Bethesda incident where a fabricated video triggered a police raid and wasted critical law enforcement resources

[02:30] ⚖️ Deepfakes in Legal Practice — The serious professional responsibility consequences when attorneys introduce fake or AI-generated evidence into a trial or negotiation — and why "I didn't know" won't always protect you

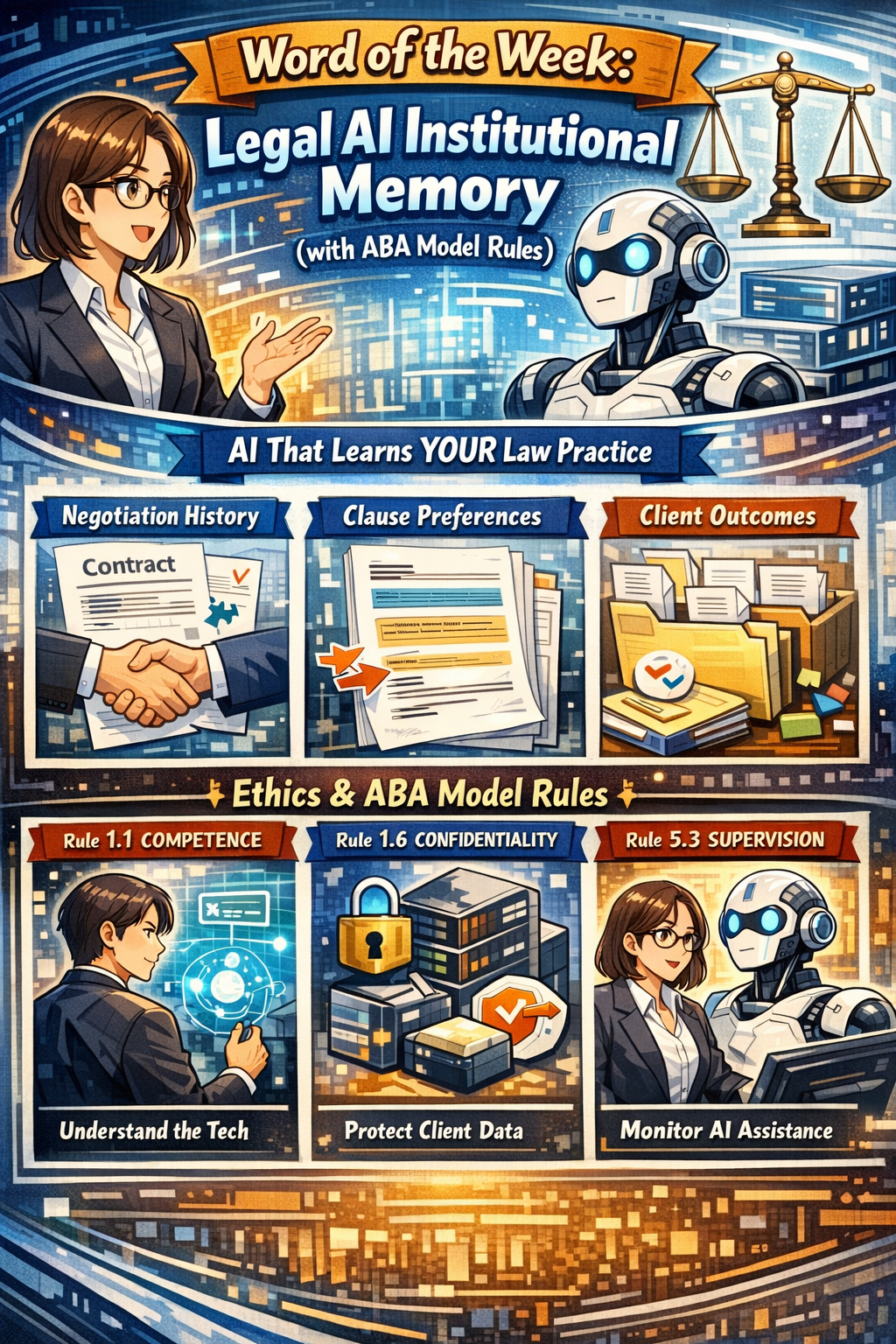

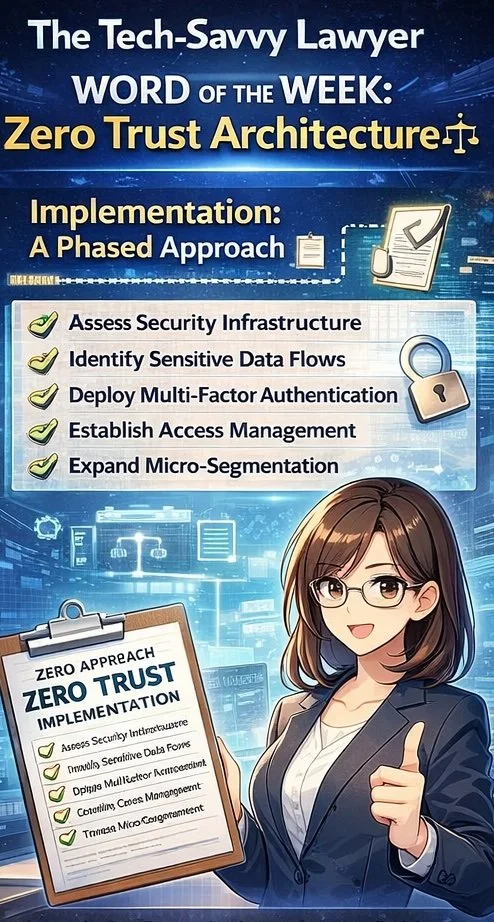

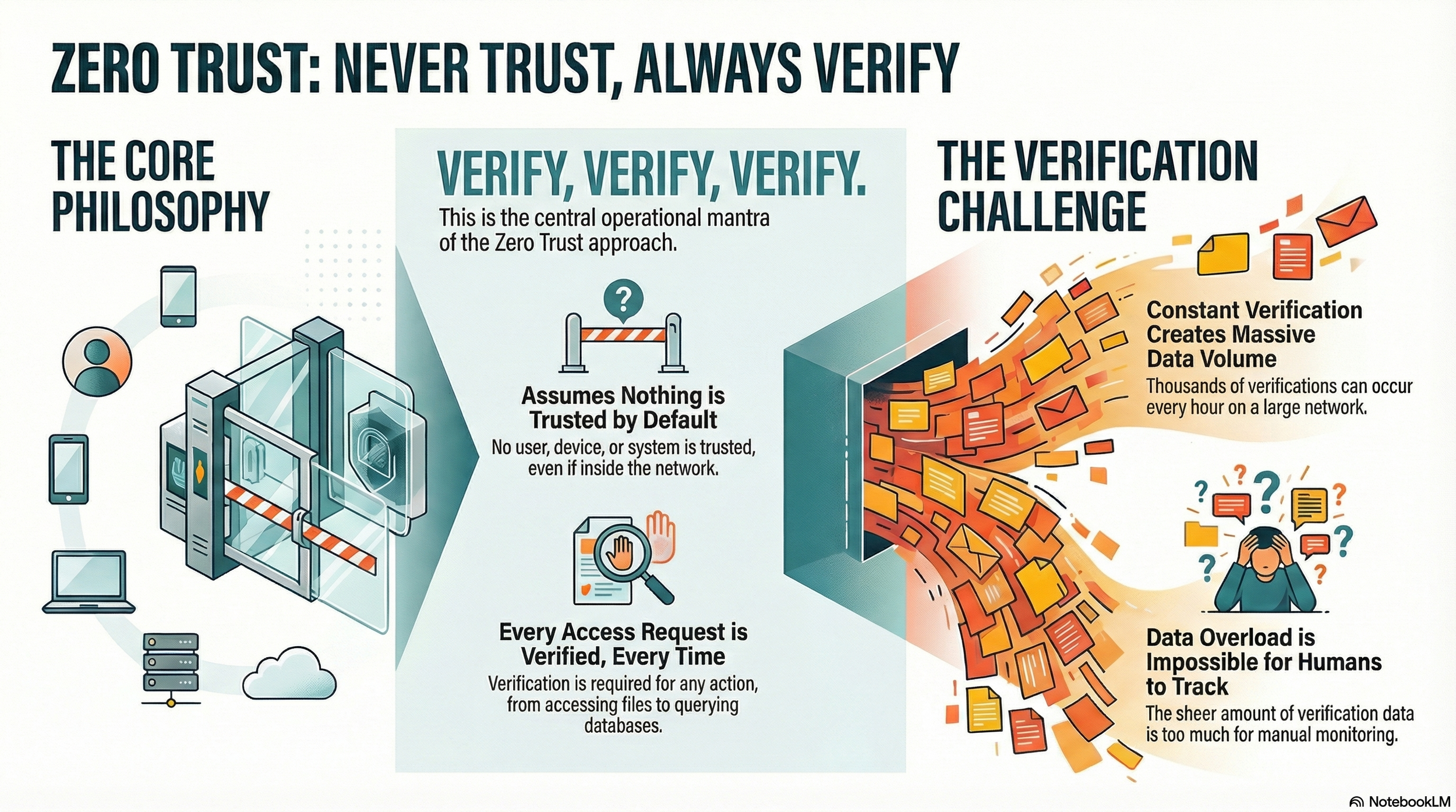

[03:00] 📋 ABA Model Rule 1.1 — Competence & Comment 8 — Why technology competence is an ethical obligation, not optional — and what "competent use of technology" actually means for your daily practice

[05:00] 📶 Public Wi-Fi & VPN Security — The real dangers of using public Wi-Fi at airports, coffee shops, and on planes without a VPN, especially when accessing client portals, trust accounts, or confidential client communications

[05:45] 🔐 VPN Recommendations — NordVPN vs. ExpressVPN; why the right VPN matters for protecting your clients and your license

[07:00] 🔍 How to Detect Deepfakes in Evidence — Reviewing file metadata, spotting visual anomalies, and the Mendones v. Cushman & Wakefield case (Cal. Super. Ct. 2025) where Judge Victoria Kolakowski spotted deepfake videos — and imposed terminating sanctions

[08:30] 📜 ABA Model Rule 3.3 — Candor to the Tribunal — Your duty of honesty to the court and the compounding risks of unknowingly submitting falsified AI-generated evidence

[09:30] 🧑‍⚖️ ABA Model Rule 8.4 — Dishonesty, Fraud, Deceit & Misrepresentation — How this rule can stack with Rule 3.3 to multiply your ethical exposure

[10:30] 🖼️ Live Demo: Accessing File Metadata — A real-time screen share showing how to right-click and access image properties, including GPS coordinates, camera settings, file timestamps, and modification history — using a photo taken right at Capital University Law School
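For readers who want to go one step beyond the right-click view, here is a minimal Python sketch of two ideas from the demo. It assumes you have already read the GPS values off the properties panel, where photo metadata typically shows them as degrees, minutes, and seconds with a hemisphere letter. The function names `dms_to_decimal` and `file_timestamps` are illustrative and were not used in the episode.

```python
from datetime import datetime, timezone
from pathlib import Path


def dms_to_decimal(degrees, minutes, seconds, ref):
    """Convert degrees/minutes/seconds plus a hemisphere letter to signed decimal degrees."""
    value = degrees + minutes / 60 + seconds / 3600
    # South latitudes and West longitudes are negative by convention.
    return -value if ref in ("S", "W") else value


def file_timestamps(path):
    """Return the size and last-modified time that the filesystem reports for a path."""
    info = Path(path).stat()
    modified = datetime.fromtimestamp(info.st_mtime, tz=timezone.utc)
    return {
        "size_bytes": info.st_size,
        "modified_utc": modified.isoformat(),
    }
```

With made-up coordinates, `dms_to_decimal(40, 30, 0.0, "N")` gives 40.5, a form you can paste into a mapping tool. Keep in mind that `file_timestamps` reports what the filesystem recorded, which can change when a file is copied or downloaded, so it is a starting point for questions rather than proof of when a photo was taken.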

[14:00] 🗂️ Metadata Scrubbing — Why some law firms strip metadata from outgoing emails and documents, and why "scrubbed" evidence should raise red flags during discovery

[17:30] 🛡️ Five Basic Safeguards for Legal Professionals in the AI Era:

1. Invest in ongoing education — CLEs, podcasts, blogs
2. Know and follow your office's technology protocols
3. Be transparent with clients about your use of AI — in your engagement letters and contracts
4. Update your engagement letters and retainer agreements now
5. Stay alert to the rapidly changing AI landscape — ignoring it is not an option

[20:00] 💬 Student Q&A — George's question: Does the ethical obligation to review metadata create a resource gap between large firms and solo practitioners? What AI tools can help level the playing field?

[22:00] ⚠️ United States v. Heppner (S.D.N.Y. 2026) — The landmark federal ruling that documents generated with consumer AI tools are NOT protected by attorney-client privilege or the work product doctrine — and why your choice of AI platform is now an ethics decision

[23:30] 🔒 Consumer AI vs. Secure Legal AI Platforms — The critical difference between free and low-cost consumer AI accounts and enterprise-grade legal AI tools with zero-data-retention contracts

[25:30] 📚 LexisNexis Protégé & Secure AI — How LexisNexis's zero-retention, end-to-end encrypted AI platform — including access to ChatGPT and Claude — lets attorneys use AI ethically without jeopardizing client data

[27:00] 💾 Prompt Discoverability — Why your AI prompts may be subpoenaed in malpractice claims or bar investigations, and why documenting and downloading your AI usage history matters

[28:30] 📁 Cassia's Question — Documenting AI research and adopting a random sampling strategy for metadata review: practical approaches to demonstrating "reasonable" due diligence
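One way to make a random sampling strategy easy to document is to draw the sample with a recorded seed, so the same selection can be reproduced later if anyone asks how the files were chosen. The sketch below is only an illustration under that assumption: the function name `sample_for_review`, the document IDs, and the sample size are hypothetical, and nothing here says what sample size a court or bar would consider reasonable.

```python
import random


def sample_for_review(document_ids, sample_size, seed):
    """Pick a reproducible random subset of documents for manual metadata review."""
    # Sort first so the same seed yields the same sample regardless of input order.
    ids = sorted(document_ids)
    if sample_size >= len(ids):
        return ids
    # A dedicated generator keeps the draw independent of other random calls.
    rng = random.Random(seed)
    return sorted(rng.sample(ids, sample_size))
```

For example, `sample_for_review(ids, 25, seed=2025)` on a production of 500 documents returns the same 25 IDs every time it is run. Noting the seed, the sample size, and the date in the file gives you a simple record of how the review set was selected.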

[30:00] 🗃️ eDiscovery Tools for Metadata at Scale — Relativity, Disco, and how these platforms help even resource-limited firms surface metadata anomalies across large document sets

[33:00] 📰 Current Awareness Resources — Where to stay current on legal tech: law.com, bar association newsletters, The Tech-Savvy Lawyer.Page blog and podcast, CLEs, and legal listservs

[33:30] 🚨 Hallucinated AI Citations & Real Consequences — Mata v. Avianca, Wadsworth v. Walmart (Morgan & Morgan pro hac vice revoked), the Chicago firm dissolution case — and why citation-checking is now a non-negotiable litigation skill

[37:00] 🎲 Book Giveaway — Rolling the virtual dice; congratulations to the winner of Michael's book, The Lawyer's Guide to Podcasting!

[38:00] 🗓️ Upcoming Events — Michael joins the Capital Law Spring Summit/Bootcamp in April; dinner with students at The Red Door Tavern

Resources

Connect with Professor Jennifer Wondracek:

E-Mail: jwondracek@law.capital.edu

LinkedIn: https://www.linkedin.com/in/jennifer-wondracek

📌 Mentioned in the Episode

🏛️ ABA Model Rules

ABA Model Rule 1.1 (Competence), Comment 8 (Technology Competence): https://www.americanbar.org/groups/professional_responsibility/publications/model_rules_of_professional_conduct/rule_1_1_competence/

ABA Model Rule 3.3 — Candor to the Tribunal: https://www.americanbar.org/groups/professional_responsibility/publications/model_rules_of_professional_conduct/rule_3_3_candor_toward_the_tribunal/

ABA Model Rule 8.4 — Misconduct (Dishonesty, Fraud, Deceit, Misrepresentation): https://www.americanbar.org/groups/professional_responsibility/publications/model_rules_of_professional_conduct/rule_8_4_misconduct/

⚖️ Case Law

Mata v. Avianca, Inc. — The landmark hallucinated AI citation case (S.D.N.Y. 2023): https://www.courtlistener.com/docket/17271435/mata-v-avianca-inc/

Mendones v. Cushman & Wakefield, Inc., No. 23CV028772 (Cal. Super. Ct. Alameda Cnty., Sept. 9, 2025) — Deepfake terminating sanctions: https://ediscoverytoday.com/2025/09/25/deepfake-videos-and-images-lead-to-terminating-sanctions-ediscovery-case-law/

United States v. Heppner, 25-cr-00503-JSR (S.D.N.Y. Feb. 10, 2026) — AI documents not protected by privilege: https://www.insideprivacy.com/artificial-intelligence/ai-and-legal-privilege-key-takeaways-from-us-v-heppner/

Wadsworth v. Walmart — Morgan & Morgan pro hac vice revocation over AI citations: (search Westlaw/Lexis for current citation)

🤔 Other

🎓 Capital University Law School — Law Library Podcast Club: https://law-capital.libguides.com/c.php?g=1168974&p=11364939

📰 Law.com — Legal news and current awareness: https://www.law.com

🏛️ Ohio State Bar Association — Ethics Hotline: https://www.ohiobar.org

💻 Hardware Mentioned in the Conversation

📱 Google Pixel 3 (used to demonstrate photo metadata and GPS geotagging in the live demo): https://store.google.com/us/category/phones

☁️ Software & Cloud Services Mentioned in the Conversation

🤖 ChatGPT (OpenAI) — Consumer & Teams AI platforms: https://openai.com/chatgpt

🤖 ChatGPT Teams — Enhanced security tier: https://openai.com/chatgpt/team

🤖 Claude (Anthropic) — AI assistant: https://claude.ai

📊 CNET — VPN rankings: https://www.cnet.com/tech/services-and-software/best-vpn/

🗃️ CS Disco — AI-powered eDiscovery platform: https://www.csdisco.com

🔐 ExpressVPN — VPN service (#1 on CNET): https://www.expressvpn.com

⚖️ LexisNexis Protégé — Legal AI platform with zero-retention, encrypted access to ChatGPT and Claude: https://www.lexisnexis.com/en-us/products/lexis-plus-ai.page

🖥️ macOS Get Info & File Tags — Native macOS metadata tool: https://support.apple.com/guide/mac-help

🖥️ Microsoft Windows File Properties (right-click → Properties → Details) — Native OS metadata viewer: https://support.microsoft.com/en-us/windows

🔐 NordVPN — VPN service (#1 on Tom's Guide): https://nordvpn.com

🤖 Perplexity AI — AI research assistant: https://www.perplexity.ai

🗃️ Relativity — eDiscovery platform with metadata analysis: https://www.relativity.com

📊 Tom's Guide — VPN rankings: https://www.tomsguide.com/best-picks/best-vpn

⚖️ Westlaw — Legal AI research vault: https://legal.thomsonreuters.com/en/products/westlaw