MTC: Judicial Warnings - Courts Intensify AI Verification Standards for Legal Practice ⚖️

Lawyers always need to check their work - AI is not infallible!

The legal profession faces an unprecedented challenge as federal courts nationwide impose increasingly harsh sanctions on attorneys who submit AI-generated hallucinated case law without proper verification. Recent court decisions demonstrate that judicial patience for unchecked artificial intelligence use has reached a breaking point, with sanctions extending far beyond monetary penalties to include professional disbarment recommendations and public censure. The August 2025 Mavy v. Commissioner of SSA case exemplifies this trend, where an Arizona federal judge imposed comprehensive sanctions including revocation of pro hac vice status and mandatory notification to state bar authorities for fabricated case citations.

The Growing Pattern of AI-Related Sanctions

Courts across the United States have documented a troubling pattern of attorneys submitting briefs containing non-existent case citations generated by artificial intelligence tools. The landmark Mata v. Avianca case established the foundation with a $5,000 fine, but subsequent decisions reveal escalating consequences. Recent sanctions include a Wyoming federal court's revocation of an attorney's pro hac vice admission after discovering eight of nine cited cases were AI hallucinations, and an Alabama federal court's decision to disqualify three Butler Snow attorneys from representation while referring them to state bar disciplinary proceedings.

The Mavy case demonstrates how systematic citation failures can trigger comprehensive judicial response. Judge Alison S. Bachus found that of 19 case citations in attorney Maren Bam's opening brief, only 5 to 7 cases existed and supported their stated propositions. The court identified three completely fabricated cases attributed to actual Arizona federal judges, including Hobbs v. Comm'r of Soc. Sec. Admin., Brown v. Colvin, and Wofford v. Berryhill—none of which existed in legal databases.

Essential Verification Protocols

Lawyers, if you fail to check your work when using AI, your professional career could be in jeopardy!

Legal professionals must recognize that Federal Rule of Civil Procedure 11 requires attorneys to certify the accuracy of all court filings, regardless of their preparation method. This obligation extends to AI-assisted research and document preparation. Courts consistently emphasize that while AI use is acceptable, verification remains mandatory and non-negotiable.

The professional responsibility framework requires lawyers to independently verify every AI-suggested citation using official legal databases before submission. This includes cross-referencing case numbers, reviewing actual case holdings, and confirming that quoted material appears in the referenced decisions. The Alaska Bar Association's recent Ethics Opinion 2025-1 reinforces that confidentiality concerns also arise when specific prompts to AI tools reveal client information.
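For readers who like to see a workflow written down, the checks described above can be tracked as a simple per-citation checklist. The following is only a minimal sketch: the class name `CitationCheck`, its fields, and the helper functions are hypothetical names invented for illustration, and the code does not query any legal database. It simply records which manual checks a person has completed and flags anything still open.

```python
from dataclasses import dataclass


@dataclass
class CitationCheck:
    """One cited authority and the manual checks completed for it (hypothetical model)."""
    citation: str
    found_in_database: bool = False    # located in an official legal database
    case_number_matches: bool = False  # case number cross-referenced
    holding_reviewed: bool = False     # a person read the actual holding
    quotes_confirmed: bool = False     # quoted material appears in the decision

    def is_verified(self) -> bool:
        """True only when every check has been completed."""
        return all((
            self.found_in_database,
            self.case_number_matches,
            self.holding_reviewed,
            self.quotes_confirmed,
        ))


def unverified(checks: list[CitationCheck]) -> list[str]:
    """Return the citations that still have at least one open check."""
    return [c.citation for c in checks if not c.is_verified()]


def ready_to_file(checks: list[CitationCheck]) -> bool:
    """A draft is ready only when no citation has an open check."""
    return not unverified(checks)


# Placeholder citations, not real cases.
draft = [
    CitationCheck("Placeholder v. Example (hypothetical)", True, True, True, True),
    CitationCheck("Sample v. Illustration (hypothetical)", True, False, False, False),
]
print(unverified(draft))     # lists the second placeholder citation
print(ready_to_file(draft))  # False until every check is complete
```

The design point is that every box is ticked by a human who actually opened the decision. The code only keeps the record honest; it does not perform the verification.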

Best Practices for Technology Integration 📱

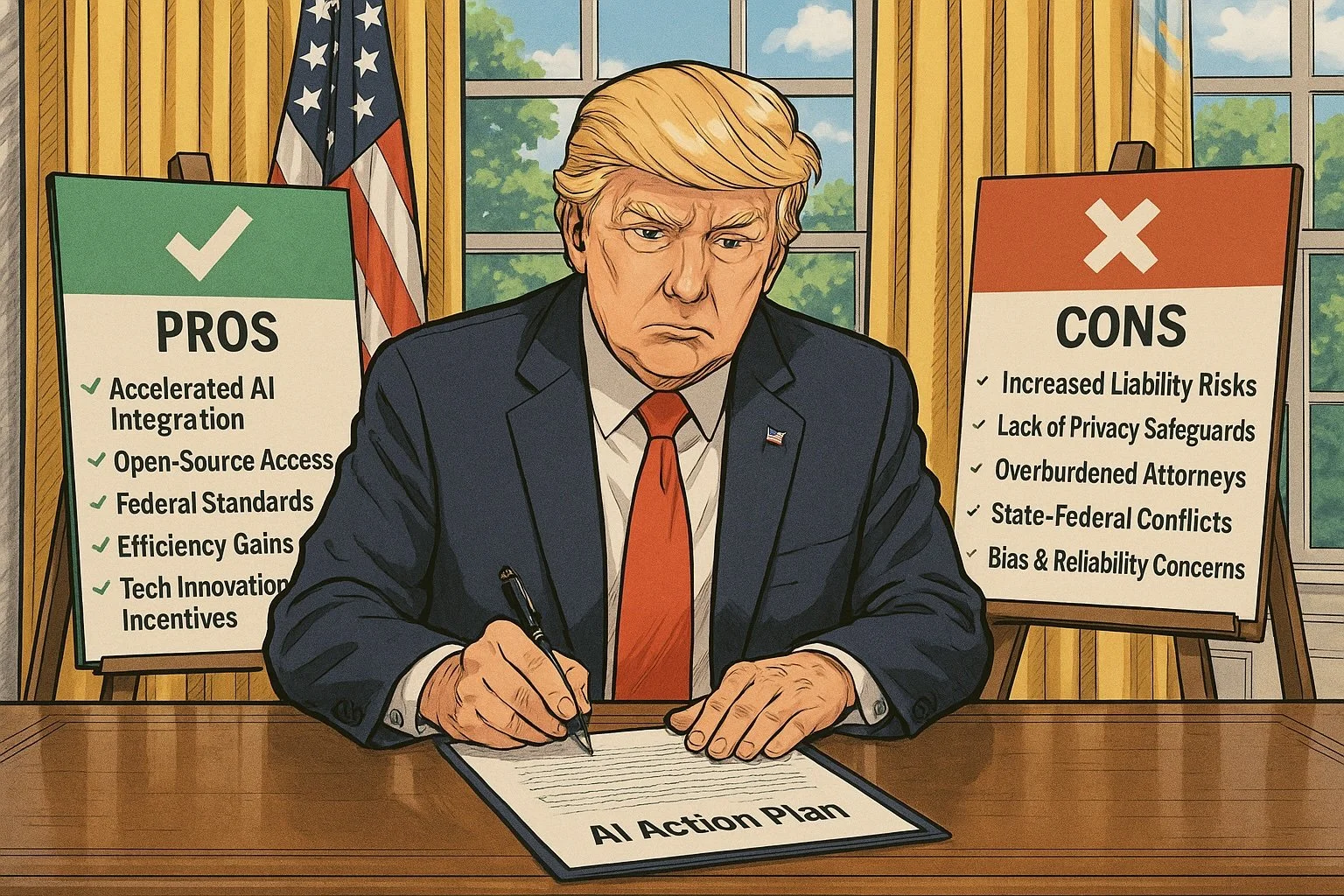

Technology-enabled practice enhancement requires structured verification protocols. Successful integration involves implementing retrieval-based legal AI systems that cite original sources alongside their outputs, maintaining human oversight for all AI-generated content, and establishing peer review processes for critical filings. Legal professionals should favor platforms that provide transparent citation practices and security compliance standards.

The North Carolina State Bar's 2024 Formal Ethics Opinion emphasizes that lawyers employing AI tools must educate themselves on associated benefits and risks while ensuring client information security. This competency standard requires ongoing education about AI capabilities, limitations, and proper implementation within ethical guidelines.

Consequences of Non-Compliance ⚠️

Recent sanctions demonstrate that monetary penalties represent only the beginning of potential consequences. Courts now impose comprehensive remedial measures including striking deficient briefs, removing attorneys from cases, requiring individual apology letters to falsely attributed judges, and forwarding sanction orders to state bar associations for disciplinary review. The Arizona court's requirement that attorney Bam notify every judge presiding over her active cases illustrates how sanctions can impact entire legal practices.

Professional discipline referrals create lasting reputational consequences that extend beyond individual cases. The Second Circuit's decision in Park v. Kim established that Rule 11 duties require attorneys to "read, and thereby confirm the existence and validity of, the legal authorities on which they rely." Failure to meet this standard reveals inadequate legal reasoning and can justify severe sanctions.

Final Thoughts - The Path Forward 🚀

Be a smart lawyer. Use AI wisely. Always check your work!

The ABA Journal's coverage of cases showing "justifiable kindness" for attorneys facing personal tragedies while committing AI errors highlights judicial recognition of human circumstances, but courts consistently maintain that personal difficulties do not excuse professional obligations. The trend toward harsher sanctions reflects judicial concern that lenient approaches have proven ineffective as deterrents.

Legal professionals must embrace transparent verification practices while acknowledging mistakes promptly when they occur. Courts consistently show greater leniency toward attorneys who immediately admit errors rather than attempting to defend indefensible positions. This approach maintains client trust while demonstrating professional integrity.

The evolving landscape requires legal professionals to balance technological innovation with fundamental ethical obligations. As Stanford research indicates that legal AI models hallucinate in approximately one out of six benchmarking queries, the imperative for rigorous verification becomes even more critical. Success in this environment demands both technological literacy and unwavering commitment to professional standards that have governed legal practice for generations.
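As a rough illustration of why a one-in-six rate matters, the sketch below works out the chance that at least one hallucination appears across several AI queries. It rests on a simplifying assumption that is not part of the cited research: each query is treated as independent with the same one-in-six error rate. The function name `chance_of_at_least_one_error` is hypothetical, and real-world rates will vary by tool and task.

```python
def chance_of_at_least_one_error(queries: int, error_rate: float = 1 / 6) -> float:
    """Probability that at least one of `queries` results contains an error.

    Assumes (for illustration only) that each query fails independently
    with the same probability `error_rate`.
    """
    if queries < 0:
        raise ValueError("queries must be non-negative")
    # Chance that every query is clean is (1 - error_rate) ** queries;
    # the complement is the chance that at least one is not.
    return 1 - (1 - error_rate) ** queries


for n in (1, 5, 10):
    print(n, round(chance_of_at_least_one_error(n), 3))
```

Under that assumption, the risk climbs quickly with the number of queries behind a filing, which is why checking every citation, rather than spot-checking a few, is the safer habit.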

MTC