MTC: Smart Recording, Client Secrets, and HeyPocket: What Every Lawyer Needs to Know in 2026 📱⚖️

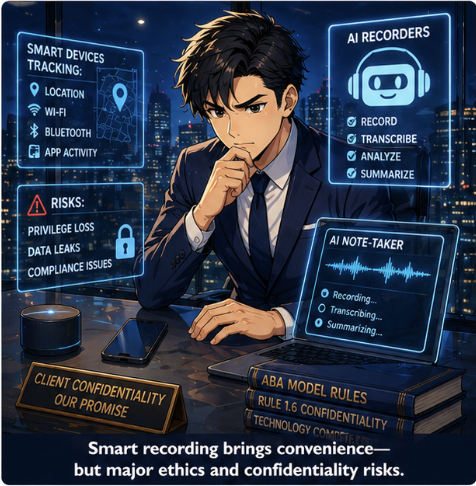

Your smartphone and AI note‑taking tools now sit in on more client conversations than many junior associates.📱 They track where you are, who you talk to, and, if you let them, what you and your clients say in real time. For lawyers, that convenience comes with concrete privilege, confidentiality, and compliance risks that cannot be ignored.⚖️

Smart Devices, AI Note‑Takers, and Constant Surveillance 📍

Modern smart devices already log GPS coordinates, Wi‑Fi networks, Bluetooth connections, and app activity, creating a rich behavioral profile of you and your clients. Smart speakers and voice assistants listen for wake words, but they sometimes capture snippets of nearby conversations and send them to remote servers for processing. Fitness wearables, in‑car systems, and “always‑on” microphones further increase the volume of ambient data that can be collected.

Against that background, AI‑enabled recorders and summarizers like Pocket (HeyPocket) add a new layer: deliberate recording, transcription, and AI analysis of your conversations. Pocket is marketed as an AI‑powered “thought companion” and conversation recorder that creates searchable summaries and action items; by design it captures each conversation as its own object to improve clarity and support consent‑based use. For a busy lawyer, this is appealing: automatic notes, organized insights, and fewer missed follow‑ups.🤖

Yet the same capabilities that make HeyPocket useful also make it ethically sensitive. You are no longer just allowing your phone to passively log metadata; you are actively routing client speech through a third‑party AI stack that stores and processes that data, subject to its own privacy policy, security posture, and retention rules.

ABA Model Rules: Competence, Confidentiality, and Truthfulness ⚖️

The ABA Model Rules already give you a clear framework for evaluating whether and how to use tools like HeyPocket in practice.

Model Rule 1.1 (Competence) and Comment 8 require lawyers to understand “the benefits and risks associated with relevant technology.” In this context, “relevant technology” includes AI‑driven recorders, their data flows, and their vendor terms. Using a tool you do not understand can be a competence problem, not just a convenience choice.⚠️

Model Rule 1.6 (Confidentiality) requires “reasonable efforts” to prevent unauthorized access or disclosure of client information, which now includes avoiding casual sharing of contacts, calendars, and conversations with apps or cloud services that may let humans review or monetize the data. Several state bar opinions already warn that lawyers may not simply click “Allow” when apps request access to contacts or case‑related data unless they determine the information will not be viewed by humans or transferred without client consent.

ABA Formal Opinion 477R outlines a risk‑based analysis for electronic communications, asking you to weigh sensitivity, likelihood of disclosure, cost of safeguards, impact on representation, client expectations, and requests for enhanced security. That same method applies directly to AI recorders: you must ask whether routing privileged discussions through an AI vendor is “reasonable” given the stakes of the matter.

ABA Formal Opinion 498 specifically calls out always‑listening smart devices and recommends disabling them during client communications to avoid unnecessary exposure to third parties. If you would mute Alexa for an intake call, you should think even more carefully before inviting an AI recording service into the room.

Model Rules 5.1 and 5.3 (supervision of lawyers and non‑lawyer assistants) also matter. If you roll out AI note‑takers firmwide, you must implement policies, training, and oversight to ensure that lawyers, staff, and vendors handle client data consistently with confidentiality obligations. And Rule 8.4(c) (prohibition on dishonesty or deception) can be implicated if you secretly record clients, witnesses, or opposing parties, even in one‑party consent jurisdictions; at least one ethics authority has treated undisclosed recordings as unethical even where they are legal.

When AI Recordings and Smart Data Become Evidence 🧾

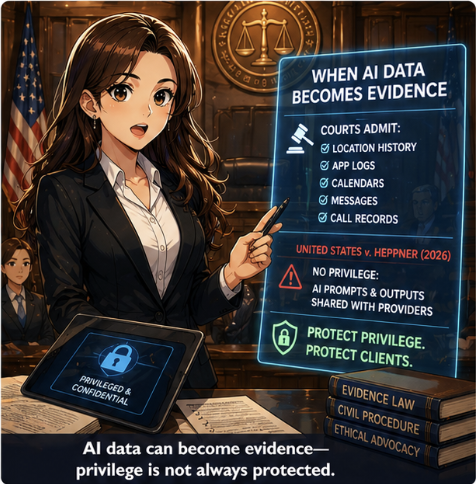

Courts have already embraced smart‑device data as evidence: location records, communication metadata, calendar entries, and app logs routinely appear in both criminal and civil litigation. Forensic tools can image a device and surface location histories, messages, and app‑generated artifacts that can reconstruct events with surprising precision.

AI tools are now entering that evidentiary picture. In United States v. Heppner (S.D.N.Y. 2026), a defendant’s use of a public AI platform to analyze his legal situation—and the documents he generated from those conversations—was held not to be protected by attorney‑client privilege or the work‑product doctrine. The court emphasized that the AI provider’s terms of service allowed collection and disclosure of prompts and outputs, so the defendant had no reasonable expectation of confidentiality.

The lesson for lawyers is direct: if you or your clients feed sensitive matter details into an AI recorder or note‑taker whose policies allow human review, secondary uses, or disclosure to third parties, privilege can be placed at risk. Vendor marketing language about security cannot substitute for a real review of actual terms, retention practices, and opt‑out mechanisms.

Using HeyPocket and Similar Tools Ethically in Practice 🎙️

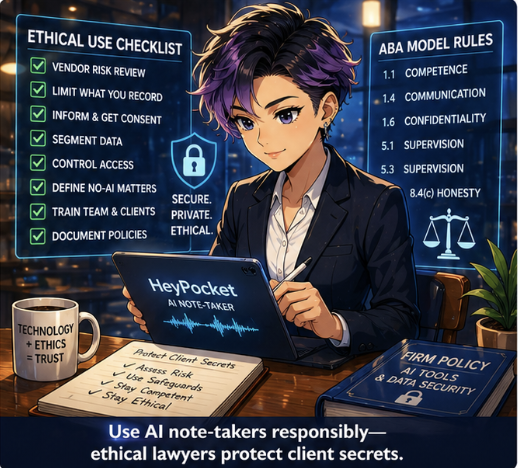

Ethical use of HeyPocket and similar tools is possible, but it is not “plug‑and‑play.” You should treat these platforms more like outsourced e‑discovery vendors than like harmless productivity apps.✅

Key practical steps include:

Perform a documented vendor risk review. Read the privacy policy and data‑processing terms to see what is recorded, how long it is stored, whether data is used to train models, and what rights you and your clients have to delete or export recordings. Confirm that access is logged and limited, and that data is encrypted in transit and at rest.

Limit what you record. Default to not recording privileged conversations unless you have a clear, articulable reason, a defensible risk assessment, and—in higher‑risk matters—informed client consent. Use tools like HeyPocket in lower‑sensitivity contexts (internal debriefs, CLE notes, public presentations) rather than as an automatic recorder of all client meetings.

Use explicit disclosures and consent. In many jurisdictions, recording requires the consent of all parties; even where only one‑party consent is required, an undisclosed recording can still trigger ethical concerns. A short, plain‑language explanation (“We use an AI note‑taking assistant that will record and transcribe this call; here is how we protect your information…”) respects client autonomy and supports informed consent under Model Rules 1.4 and 1.6.

Segment data and control access. Configure firm accounts so that recordings are tied to matters, not to individuals’ personal devices, wherever possible. Restrict who can review recordings and summaries, and enforce role‑based permissions consistent with your obligations under Rules 5.1 and 5.3.

Define bright‑line “no AI” categories. Certain matters—criminal defense, internal investigations, sensitive family or immigration cases, high‑value trade secret disputes—may warrant a categorical ban on AI recorders because the downside of any leak is catastrophic. Document these categories in your technology and confidentiality policies.

Train your team and your clients. Explain to lawyers, staff, and key clients that not every AI interaction is confidential or privileged and that using consumer‑grade tools on their own may waive important protections. Encourage clients to avoid entering matter‑specific facts into public AI systems without discussing it with you first.
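
For firms that want to turn the steps above into something repeatable, the checks can be written down as a simple decision gate. The Python sketch below is only an illustration under stated assumptions: the category labels, field names, and the `may_record` function are hypothetical, it does not reflect any HeyPocket feature or API, and the pass/fail choices (for example, treating model training on your data as disqualifying, or requiring consent in every case) are one possible firm policy, not a rule drawn from the ABA opinions.

```python
from dataclasses import dataclass
from typing import Tuple

# Hypothetical labels mirroring the "no AI" examples named in the text.
NO_AI_CATEGORIES = {
    "criminal_defense",
    "internal_investigation",
    "family",
    "immigration",
    "trade_secret",
}


@dataclass
class VendorReview:
    """Result of a documented vendor risk review (illustrative fields only)."""
    encrypted_in_transit_and_at_rest: bool
    access_logged_and_limited: bool
    trains_models_on_customer_data: bool
    allows_deletion_and_export: bool

    def passed(self) -> bool:
        # Assumption: this firm treats model training on its data as a failure.
        return (
            self.encrypted_in_transit_and_at_rest
            and self.access_logged_and_limited
            and not self.trains_models_on_customer_data
            and self.allows_deletion_and_export
        )


@dataclass
class RecordingRequest:
    matter_category: str
    privileged: bool
    all_parties_consented: bool
    documented_reason: str = ""


def may_record(request: RecordingRequest, review: VendorReview) -> Tuple[bool, str]:
    """Return (allowed, reason), applying the checks in a fixed order."""
    if request.matter_category in NO_AI_CATEGORIES:
        return False, "matter falls in a 'no AI' category"
    if not review.passed():
        return False, "vendor risk review not passed"
    if not request.all_parties_consented:
        return False, "missing disclosure and consent"
    if request.privileged and not request.documented_reason.strip():
        return False, "privileged conversation without a documented reason"
    return True, "permitted under firm policy"


if __name__ == "__main__":
    review = VendorReview(True, True, False, True)
    print(may_record(RecordingRequest("cle_notes", False, True), review))
    print(may_record(RecordingRequest("criminal_defense", True, True), review))
```

The ordering is deliberate: the categorical ban is checked first so that no amount of vendor diligence or consent can override it, and the default for a privileged conversation is "no" unless someone has written down why recording is justified. A written policy or intake form can express the same logic without any code.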

Approached this way, a tool like HeyPocket can be used as a controlled, auditable note‑taking assistant rather than a stealth surveillance risk. The ethical question is not “AI recorder: yes or no?” but “Under what conditions, with what safeguards, and in which matters, if any, is this tool a reasonable choice?”

Technology Competence as a Continuous Obligation 🚀

Technology will only grow more invasive, more ambient, and more tightly integrated with everyday law practice.📈 ABA and state bar guidance increasingly treats technology competence as an ongoing duty, tied directly to confidentiality, supervision, and even malpractice exposure. Smart devices and AI platforms are not going away, so opting out entirely is rarely realistic.

For lawyers with limited to moderate technical skills, the path forward is practical: build a short, repeatable checklist for evaluating tools; lean on reputable vendors with clear, lawyer‑friendly terms; seek help from cybersecurity professionals when stakes are high; and treat client confidentiality as the non‑negotiable anchor for every technology decision. When you do that, you can leverage products like HeyPocket to improve focus and memory while still honoring the core promise that underlies every engagement letter: your client’s secrets stay safe.🔐

MTC