When Your AI Thinks It’s 1930: How Lawyers Must Manage “Frozen” Data Sets Versus the Live Internet 🧠⚖️

AI Legal Research Demands Current Data and Human Judgment

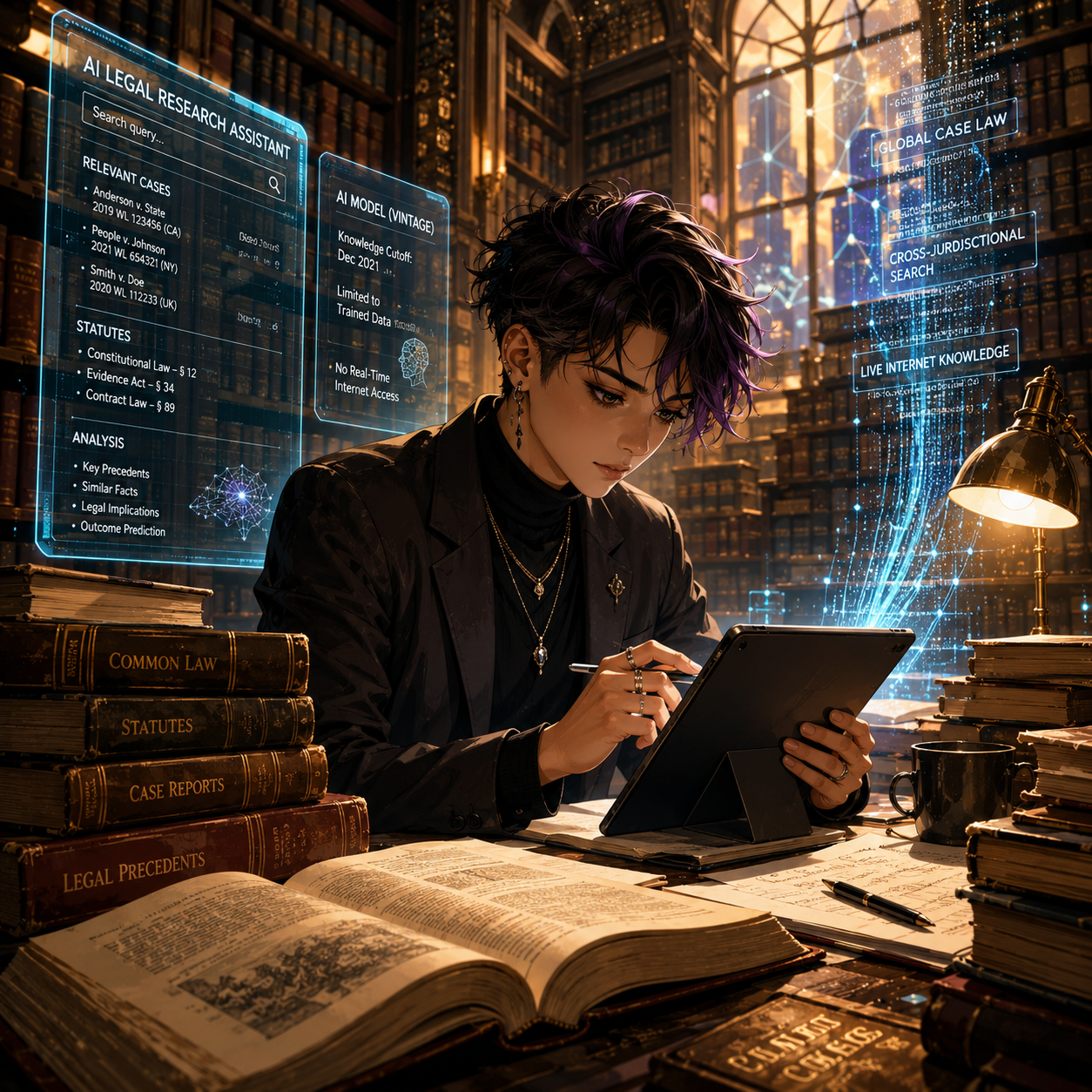

A recent Malwarebytes article profiled “Talkie,” a 13‑billion‑parameter chatbot trained only on English‑language texts published before 1931. The model knows nothing past the opening years of the Great Depression: no email, no smartphones, no cybercrime, and certainly no modern e‑discovery.

For lawyers, Talkie is more than a curiosity. It is a vivid illustration of what happens when an AI’s world stops at an arbitrary date, and why we must understand the difference between isolated data sets and models that continuously ingest the modern internet. That distinction goes straight to your duties of competence, confidentiality, supervision, and candor under the ABA Model Rules.

A recurring theme on The Tech‑Savvy Lawyer podcast is that “AI is the junior associate you don’t have to hire—but still have to supervise.” Talkie shows us what happens when that junior associate’s legal education ends in 1930. The lesson for your practice is simple: you cannot outsource judgment to any tool, especially one whose view of the world is frozen in time.

What “Vintage AI” Teaches Modern Lawyers 🕰️

Talkie was trained entirely on digitized books, newspapers, legal texts, and other publications from 1930 or earlier that are now in the public domain, both to avoid modern copyright headaches and to explore how AI reasons without the internet. In other words, it is a deliberately isolated system: no post‑1930 statutes, no contemporary case law, no modern regulations.

That design makes Talkie an excellent analogy for every “walled garden” AI lawyers are now being sold—closed research tools, local models trained only on internal firm documents, or court‑approved systems limited to a curated corpus. These tools can be invaluable, but only if you understand three things:

What is in the data set.

What is deliberately excluded.

How often the corpus is refreshed—or if it ever is.

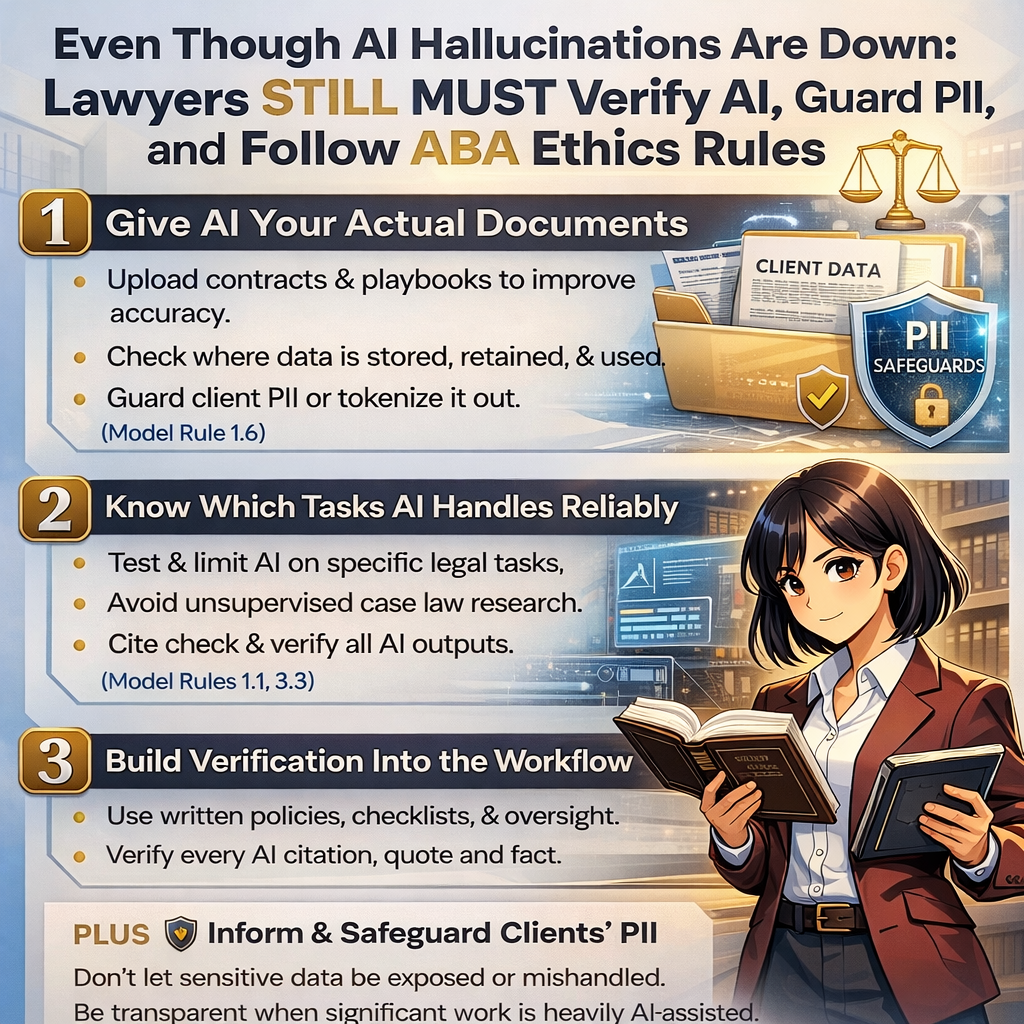

Under Comment 8 to Model Rule 1.1, competence includes keeping abreast of the “benefits and risks” associated with relevant technology, which in 2026 squarely includes AI trained on defined corpora. If you do not know what your AI has seen, you cannot competently rely on what it says.

Isolated Data Sets: The Upside for Lawyers ✅

Many solos and small firms are understandably drawn to “closed” or time‑boxed AI systems because they feel safer and more controllable. 😊 Properly designed, those systems can offer real advantages:

Predictable scope of authority

An AI trained only on a vetted body of primary law and secondary sources may be easier to supervise, because you know its universe of materials. You can design workflows where AI research is always checked against the underlying authorities that you recognize and trust.

Reduced confidentiality and IP risk

Talkie avoids modern copyright disputes by staying within the public domain. Similarly, a local or on‑premises model that does not send data back to a vendor can help you satisfy Model Rule 1.6’s confidentiality obligations, assuming you confirm that the tool does not re‑use your client data to train others’ models.

Consistent, auditable outputs

With an isolated corpus, it is often easier to log queries, outputs, and the underlying sources, which supports your obligations under Rules 5.1 and 5.3 to supervise both lawyers and non‑lawyer assistants, including AI tools.

For certain use cases—drafting from your own templates, summarizing client files, or querying only your firm’s knowledge base—a “frozen” or walled‑off model can be exactly the right approach.

The Hidden Risks of “Frozen” Knowledge 🚨

Lawyers Must Verify AI Case Summaries Before Court

The Malwarebytes article emphasizes that Talkie has “no concept” of anything after 1930. That is charming when it tries to explain a “smartphone” using the vocabulary of the telegraph age; it is malpractice waiting to happen if your research tool does the equivalent in a modern brief.

For lawyers, isolated or out‑of‑date data sets create at least four serious risks:

Outdated or incomplete law

A time‑boxed research tool can miss controlling authority, recent statutory amendments, or new regulations. Under Model Rules 1.1 and 3.3, you cannot rely on a system that stops short of the current law and then present its output as if it were complete.[5][10][3]

Distorted factual context

An AI that has never “seen” modern technology, social conditions, or scientific developments will reason with blind spots that can undermine your factual investigations under Rules 1.1 and 1.3. Think about relying on a pre‑1931 lens for today’s cybersecurity, social media defamation, or veterans’ disability claims involving modern diagnostics.

Invisible bias baked into old texts

Pre‑1931 materials, like any historical corpus, embed the social, racial, and gender biases of their era. A “vintage” model may reproduce those biases in ways that conflict with your obligations around fairness and anti‑discrimination, and could taint your client‑intake, hiring, or case‑evaluation workflows.

False sense of safety

Because these systems are “limited,” lawyers may assume they are automatically compliant or “approved.” 😬 But ABA Formal Opinion 512 is clear: the existing rules—competence, confidentiality, communication, candor, supervision, and reasonable fees—apply equally to AI tools, regardless of their training set.

The message: isolation is not a substitute for judgment. It simply changes the error profile you must manage.
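To make the “frozen knowledge” problem concrete, here is a minimal Python sketch of a cutoff check. It assumes you know your tool’s corpus cutoff date and the dates of the authorities you care about; the function name, the cutoff value, and both sample authorities are hypothetical and exist only for illustration.

```python
from datetime import date


def flag_post_cutoff(corpus_cutoff: date, authority_dates: dict[str, date]) -> list[str]:
    """Return the authorities dated after the cutoff, which the tool cannot have seen."""
    return sorted(name for name, d in authority_dates.items() if d > corpus_cutoff)


# Assumed cutoff for a corpus limited to texts published before 1931.
cutoff = date(1930, 12, 31)

# Hypothetical authorities, not real citations.
authorities = {
    "Hypothetical 1925 treatise": date(1925, 6, 1),
    "Hypothetical 2024 amendment": date(2024, 3, 1),
}

print(flag_post_cutoff(cutoff, authorities))
```

Anything the check flags must be researched somewhere else. An empty result does not prove the tool’s answer is complete; it only means the date alone does not rule the tool out.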

Live Internet Models: Power With Extra Liability 🌐

At the other end of the spectrum are AI tools connected to the live internet—systems that can pull from statutes, cases, news, and commentary that changed yesterday or this morning. They offer speed and breadth that solos and small firms could only dream of a few years ago.

But internet‑connected models also present their own set of concerns:

Hallucinations blended with real‑time data

Even when a system claims to be “citing live sources,” you still must verify every authority under Rules 1.1, 3.3, and 5.3. Courts and bars have already disciplined lawyers for filing AI‑generated briefs with fabricated citations.

Ongoing confidentiality exposure

If the model sends prompts to remote servers, you must analyze data‑handling, retention, and training policies to comply with Rule 1.6. You may need to anonymize prompts, modify your engagement letters, or obtain informed consent for certain uses, as many bars and Formal Opinion 512 recommend.

Dynamic but uncurated sources

Unlike a curated pre‑1931 corpus, the open web mixes reliable law with marketing pages, blog posts of dubious quality, and outright misinformation. Under Model Rule 1.1, you must treat AI‑surfaced content like any other secondary source: helpful, but never authoritative without independent confirmation.

The fact that a tool is “up to date” does not relieve you of your duty to be right. It just changes where the landmines are. 😄

Practical Guardrails for AI‑Curious Lawyers 🛠️

In a recent episode of The Tech‑Savvy Lawyer podcast with AI consultant Hamid Kohan, we discussed building an “AI‑ready” practice that treats these tools like supervised, specialized staff—not black boxes. Whether you use a Talkie‑style frozen model, a live internet assistant, or both, consider putting these guardrails in place:

Inventory your AI tools and their data sources

For each tool, document what data set(s) it uses (public domain only, commercial databases, firm documents, open web), how often it updates, and how it handles your data. This goes directly to your competence and confidentiality duties under Rules 1.1 and 1.6.

Define “approved uses” in your firm policies

Under Rules 5.1 and 5.3, establish written guidance for lawyers and staff: e.g., “Use Tool A only for drafting internal outlines,” or “Use Tool B for brainstorming arguments, but never for final citations.” Train your team accordingly and revisit those policies quarterly.

Mandate human verification of law and facts

Require that all AI‑generated citations, quotations, and factual assertions be checked against primary sources and the actual record before leaving the firm. That is how you satisfy Rules 1.1, 3.3, and your supervisory obligations.

Be transparent with clients and courts

ABA guidance encourages disclosure of AI use where it is material to the representation or required by court rule. Consider adding a brief, plain‑English AI disclosure to your engagement letters and being prepared to describe, if asked, how you supervise AI‑assisted work.

Avoid over‑reliance that dulls your own analysis

California’s guidance warns against delegating your professional judgment to generative AI or letting it replace your own research and critical thinking. Use AI as a springboard, not a crutch—an approach we have explored on The Tech-Savvy Lawyer.Page blog and podcast.

These steps are manageable even for solo and small‑firm lawyers with modest tech skills, and they align neatly with existing ethics frameworks. 💡
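For readers who like to see the inventory idea written down, here is a minimal Python sketch of what one record might look like. The field names, the two sample tools, and the 90‑day review window (chosen to match a quarterly policy review) are all assumptions for illustration, not a prescribed format.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import Optional


@dataclass
class AITool:
    """One row in a hypothetical firm AI inventory."""
    name: str
    data_sources: list[str]          # e.g. "firm documents", "open web"
    last_refreshed: Optional[date]   # None means frozen corpus or date unknown
    sends_data_to_vendor: bool       # relevant to a confidentiality review
    approved_uses: list[str] = field(default_factory=list)


def needs_review(tool: AITool, today: date, max_age_days: int = 90) -> list[str]:
    """Return plain-English reasons this tool should be re-examined."""
    reasons = []
    if tool.last_refreshed is None:
        reasons.append("corpus refresh date unknown or frozen")
    elif (today - tool.last_refreshed).days > max_age_days:
        reasons.append("corpus not refreshed within review window")
    if tool.sends_data_to_vendor:
        reasons.append("prompts leave the firm; confirm retention and training terms")
    if not tool.approved_uses:
        reasons.append("no approved uses documented")
    return reasons


# Hypothetical sample entries.
inventory = [
    AITool("Vintage model", ["public domain texts"], None, False, ["historical curiosity"]),
    AITool("Web assistant", ["open web"], date(2026, 1, 15), True, []),
]

for tool in inventory:
    print(tool.name, "->", needs_review(tool, today=date(2026, 2, 1)))
```

A spreadsheet with the same columns works just as well. What matters is that each tool’s data sources, refresh date, data handling, and approved uses are written down and revisited on a schedule.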

Choosing Between “Frozen” and “Live” AI: A Simple Matrix 📊

Frozen AI Data Sets Challenge Modern Legal Research

When should you prefer an isolated corpus, and when do you need the modern web? For many practices, especially disability, administrative, and appellate work, the answer is “both,” but for different tasks.

Use isolated or internal models for:

Summarizing your client’s file or medical records.

Drafting from your own templates and prior briefs.

Issue‑spotting in areas where the governing law is baked into the tool and updated on a known schedule.

Use live internet‑connected models (with caution) for:

Brainstorming novel arguments and locating secondary sources.

Scanning for recent regulatory changes or commentary.

Getting “layperson‑level” explanations you then translate into lawyer‑grade analysis.
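If your firm wants to encode the two lists above in a written policy or an internal tool, one possible shape is a simple lookup table. The Python sketch below is only an illustration: the task labels paraphrase the lists, and the names `ModelKind`, `TASK_ROUTES`, and `route` are hypothetical.

```python
from enum import Enum


class ModelKind(Enum):
    ISOLATED = "isolated or internal model"
    LIVE = "live internet-connected model"


# Task labels paraphrase the two lists above; adjust them to your own policy.
TASK_ROUTES = {
    "summarize client file": ModelKind.ISOLATED,
    "draft from firm templates": ModelKind.ISOLATED,
    "issue-spot in covered area": ModelKind.ISOLATED,
    "brainstorm arguments": ModelKind.LIVE,
    "scan recent regulatory changes": ModelKind.LIVE,
    "layperson explanation": ModelKind.LIVE,
}


def route(task: str) -> tuple[ModelKind, bool]:
    """Return (model kind, human_review_required). Review is always required."""
    if task not in TASK_ROUTES:
        # Unlisted tasks have no approved tool until the policy says otherwise.
        raise KeyError(f"No approved route for task: {task!r}")
    return TASK_ROUTES[task], True


print(route("summarize client file"))
print(route("brainstorm arguments"))
```

The second value is hard‑coded to `True` on purpose: no route skips human verification, whichever kind of model handles the task.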

In every scenario, you remain the final filter. Under the Model Rules, AI can accelerate your work, but it cannot own your judgment. Talkie is a reminder that the scope of what your AI knows is now an ethics question, not just a technical detail.

Final Thoughts: Don’t Let Your Practice Get Stuck in 1930 ✨

Talkie’s charm lies in its limitations—it is a window into a world before the internet, World War II, and modern computing. Your law practice does not have that luxury. Clients expect you to understand the present, anticipate the future, and choose tools that serve both.

Whether your AI is frozen in 1930 or streaming 2026 in real time, the obligations are the same: know what it knows, know what it cannot know, and supervise it accordingly. If you do that, you can harness AI’s benefits without letting your ethical obligations slip into the past. 🚀