MTC: When the Search Engine Itself Is the Ethical Issue: What Lawyers Must Know About AI Search vs. Traditional Internet Research

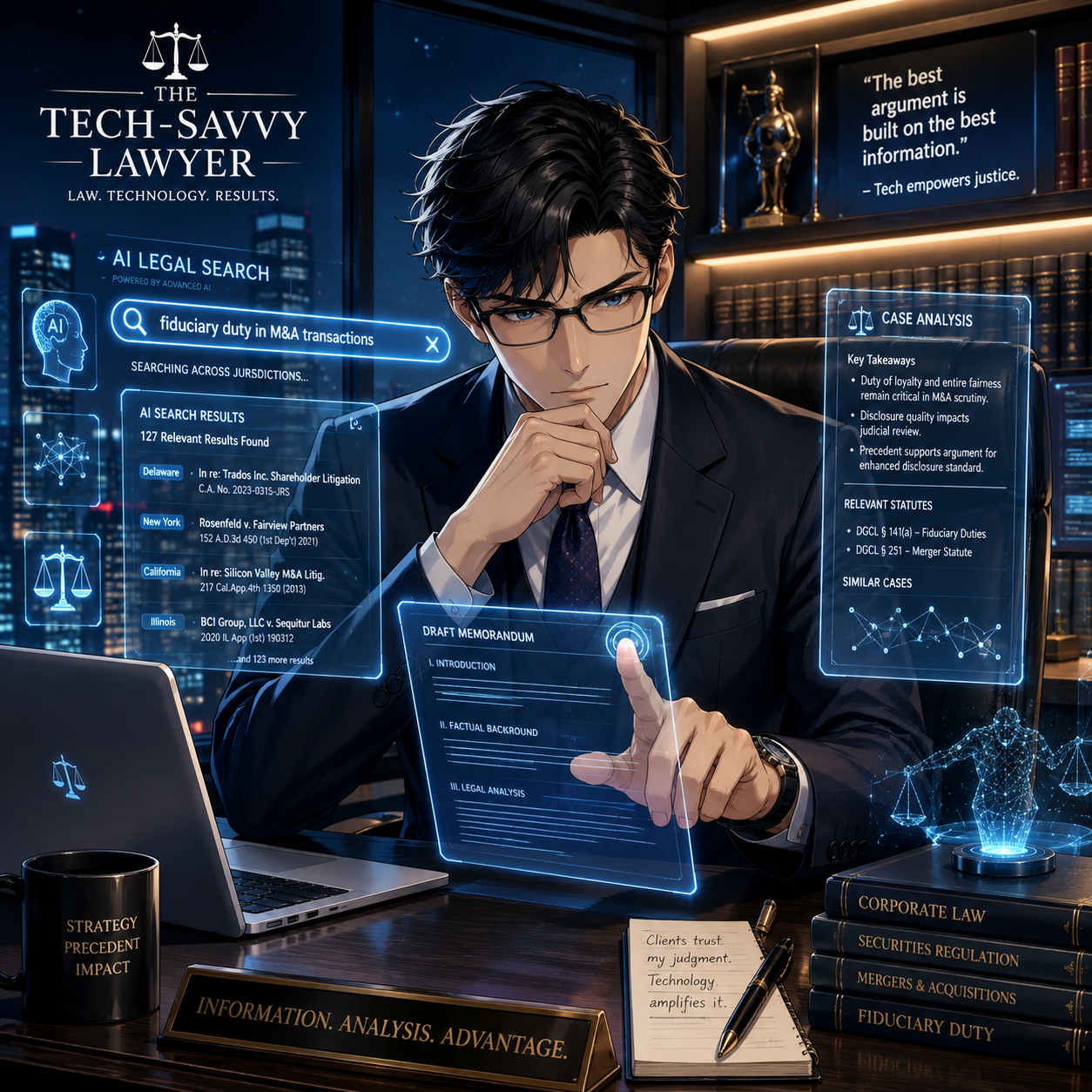

AI Legal Search Transforms Modern Lawyer Research

There is a quiet revolution happening at the very top of your browser, and most lawyers haven't noticed it yet. 🔍

The search box — that deceptively simple rectangle you've used to research case law, check opposing counsel's background, or verify a client's claims — is no longer neutral ground. In May 2026, Google announced a sweeping AI-first reimagining of its search experience, complete with AI-generated answer summaries, "Search agents" that act on your behalf, and deep integrations with Gmail and Google Photos through what it calls "Personal Intelligence."

Almost immediately, something remarkable happened. Privacy-focused search engine DuckDuckGo reported that traffic to its "No AI" search option more than tripled in the days following Google's announcement. Visits averaged 84 percent above baseline — and climbing. Users are voting with their clicks, and lawyers should be paying close attention to why.

Because for attorneys, this isn't just a preference question. It is an ethics question. 🏛️

The Search Box Has Always Been a Legal Tool

Before we talk about AI search, let's be honest about something: lawyers have always used internet research in professionally complex ways. Whether you're doing due diligence on a new client, investigating facts before a deposition, or checking whether a potential expert witness has any embarrassing public statements, search engines are embedded in legal practice.

The ABA has taken note. Comment 8 to ABA Model Rule 1.1 (Competence) directs lawyers to keep abreast of "changes in the law and its practice, including the benefits and risks associated with relevant technology." The ABA's 2012 amendment to that comment was, frankly, ahead of its time. Today, "relevant technology" includes the search engine itself, not just the results it returns.

The question lawyers must now ask is not just what a search engine finds. The question is how it finds it — and what it does to the information before it reaches your eyes. 👁️

What "AI Search" Actually Does — And Why It Matters for Lawyers

Google's new AI search doesn't just retrieve pages. It synthesizes, summarizes, and presents information as if it were settled fact. The AI generates an "answer" at the top of the results, often without clearly displaying the sources behind it. It uses conversational follow-up prompts and can even tap into your personal data (your Gmail, your calendar, your search history) to "personalize" results through its Personal Intelligence features.

For a casual user looking up a dinner recipe, this may be delightful. For a lawyer performing professional research, this architecture introduces risks that are not hypothetical. They are disciplinary. ⚖️

Consider these practical scenarios:

Investigating a witness or opposing party: If AI search synthesizes social media profiles, news articles, and forum posts into a single summary, is the attorney seeing an accurate picture — or an AI-curated composite? Errors of omission matter enormously in litigation.

Researching local ordinances or regulations: AI-generated summaries have been documented to cite outdated legal authority or blend jurisdictions. A confident-sounding AI answer about a zoning statute may be silently wrong.

Client intake due diligence: If your search engine is pulling from your own Gmail history to "personalize" results, there are immediate questions about information separation and confidentiality walls.

This implicates ABA Model Rule 1.3 (Diligence), Rule 1.6 (Confidentiality), and — for litigators — the broader duty of candor under Rule 3.3. Relying on an AI-synthesized result without independent verification is not diligent research. It is relying on someone else's summary of someone else's sources. 🚩

The Competence Gap Nobody's Talking About

AI Case Summaries Enter the Modern Courtroom

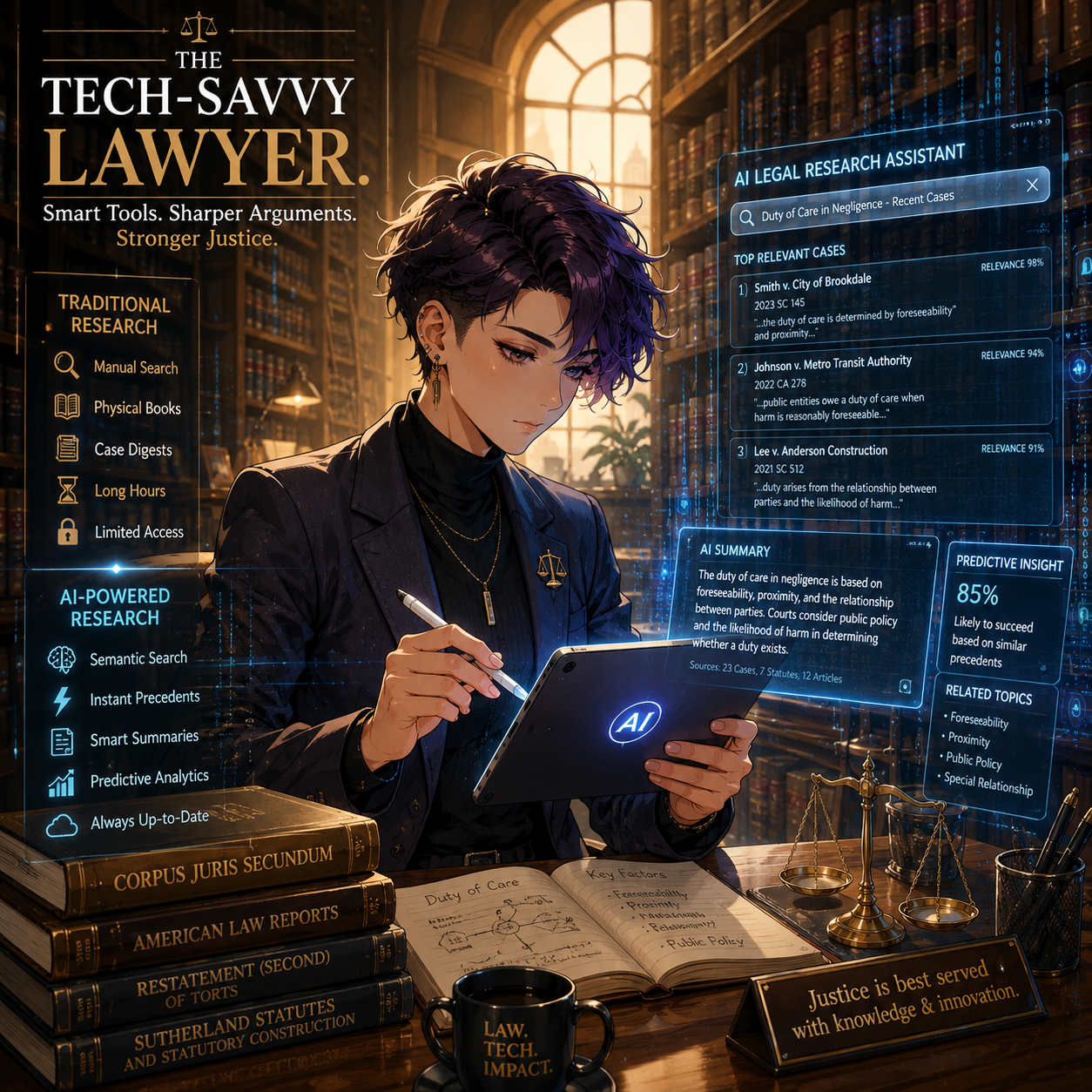

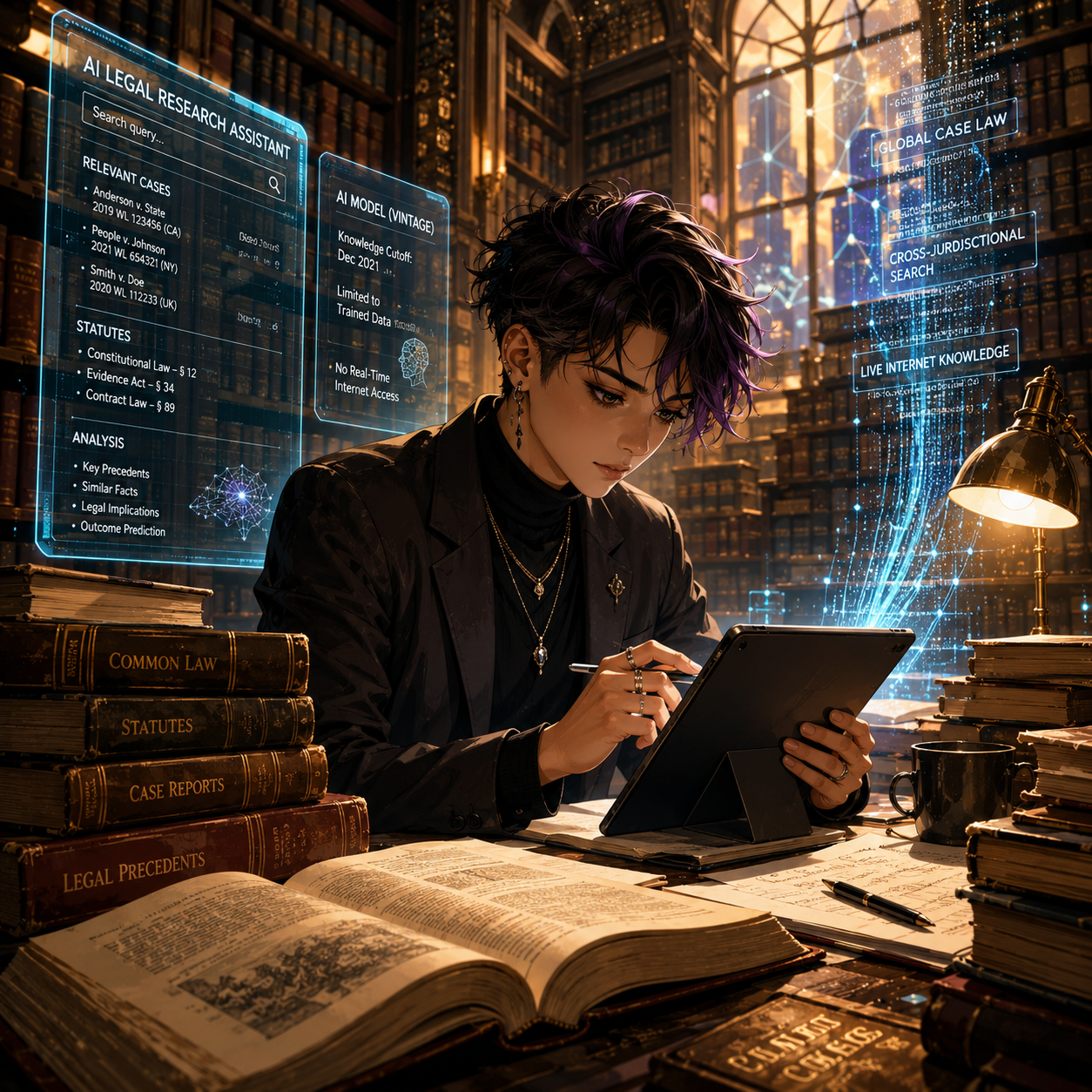

Here is the nuance that most bar ethics opinions haven't caught up to yet: using AI search is not the same as using an AI legal research tool like Westlaw AI or Lexis+ AI. Those platforms are built on curated, citation-verified legal databases, with clear provenance for every source. General-purpose AI search, such as Google's new paradigm, Bing Copilot, or Perplexity AI, is built on the open web, with all of the unreliability that implies.

When a lawyer asks Westlaw AI to find authority on a legal standard, the system is drawing from a professionally maintained legal corpus. When a lawyer asks Google's AI search, "What are the statute of limitations rules in Virginia for contract claims?", the AI is generating a confident-sounding answer from whatever it found on the open internet, synthesized by a model that does not practice law and has no malpractice insurance. *Note: this does not mean you can skip checking AI output from dedicated legal research platforms. They make mistakes too! ALWAYS CHECK YOUR WORK!!!

That distinction is not just academic. It is the difference between competent research and a disciplinary complaint. 📋

ABA Formal Opinion 512 (2024) addressed the use of generative AI tools broadly, emphasizing that attorneys bear full responsibility for the accuracy of AI-generated work product and may not "delegate" verification to a machine. The same logic extends directly to AI-generated search summaries. The attorney who reads an AI answer and relies on it without checking the underlying sources has not completed professional research.

DuckDuckGo's "No AI" Option: A Signal Worth Heeding

The surge in DuckDuckGo's "No AI" search traffic is instructive for lawyers precisely because the users driving that surge aren't Luddites. They are professionals and technologists who understand the difference between AI-assisted search and raw, unmediated results.

DuckDuckGo's No AI search returns traditional link-based results without AI-generated answer overlays, without a chat interface, and with significantly fewer AI-generated images cluttering the results. For legal professionals performing factual investigation, that architecture has a significant advantage: what you see is a list of sources, not a synthesized narrative. You evaluate the sources. You apply legal judgment. The machine does not pre-filter reality for you.

Alternative privacy-first search engines like Kagi operate on a similar premise — paid, ad-free, with AI tools strictly opt-in. These are not fringe products. They are increasingly mature, professional-grade tools.

The point is not that lawyers must abandon Google. The point is that lawyers must understand what any given search tool is doing with their query and their results — and make a deliberate professional choice. 🎯

Confidentiality Implications Hiding in Plain Sight

Here's a dimension that deserves its own continuing legal education session: what happens to your search queries?

Google's Personal Intelligence features explicitly connect your search behavior to your Gmail, your Google Photos, and your account activity. For most users, this integration is a convenience. For lawyers, it is a potential Rule 1.6 problem.

If you are searching for information related to a client matter using a Google account connected to your professional email, you may be feeding client-related data into a system with its own data retention, analytics, and AI training policies. This is not speculation. It is the documented architecture of modern AI-integrated search.

The same risk applies to any AI search tool that logs, retains, or uses your queries for model training. Before using an AI search tool for client-related research, lawyers should review that platform's terms of service and privacy policy with the same scrutiny they'd apply to a cloud storage agreement.

A Practical Framework for the Ethically Conscious Lawyer

Here's what I recommend to every attorney I speak with: 🛠️

2. Verify every AI-generated summary. If an AI search tool gives you a synthesized answer, treat it as a lead, not a conclusion. Click through to primary sources. Confirm the date, jurisdiction, and accuracy of every material fact.

3. Audit your search tool's data practices. Before using any search engine — AI-powered or otherwise — for client-related research, understand what the platform does with your queries. Update your firm's privacy policy and client engagement letters accordingly.

4. Create a firm search policy. Solo practitioners and small firms alike benefit from a standard for how internet research is conducted, documented, and verified. Ideally, put it in writing, because it could be your first line of defense in a grievance proceeding.

5. Distinguish between research and investigation. When using internet research to investigate persons — clients, witnesses, opposing parties — remember that ABA Formal Opinion 466 addresses the ethical limits of reviewing publicly available juror social media. Similar caution applies to using AI-curated profiles of any individual.
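For readers who like to see a policy as something concrete, here is one way the "conducted, documented, and verified" idea above could be sketched as a simple research log. This is a minimal illustration, not a compliance tool: the class names, field names, and the rule inside `is_verified` are all my own assumptions, and your firm's policy may reasonably demand more.

```python
from dataclasses import dataclass, field
from datetime import date
from typing import List


@dataclass
class SourceCheck:
    """One primary source a person actually opened and read (hypothetical record shape)."""
    citation: str
    jurisdiction: str
    checked_on: date
    still_current: bool  # the attorney's own judgment after reading the source


@dataclass
class ResearchLogEntry:
    """One search run for a client matter (all field names are illustrative)."""
    matter_id: str
    tool: str               # e.g. "general web search" or "legal database"
    query: str
    ai_summary_shown: bool  # did the tool present a synthesized answer?
    sources: List[SourceCheck] = field(default_factory=list)

    def is_verified(self, required_jurisdiction: str) -> bool:
        """Assumed rule: verified only if at least one current primary source
        from the required jurisdiction has been checked by a person."""
        return any(
            s.still_current and s.jurisdiction == required_jurisdiction
            for s in self.sources
        )


# Example: an AI summary alone never counts as verification.
entry = ResearchLogEntry(
    matter_id="example-matter",
    tool="general web search",
    query="statute of limitations for contract claims",
    ai_summary_shown=True,
)
before = entry.is_verified("VA")

# After a person reads a primary source, the entry can be marked verified.
entry.sources.append(
    SourceCheck("placeholder citation", "VA", date.today(), still_current=True)
)
after = entry.is_verified("VA")
```

The design choice worth noticing is that `ai_summary_shown` is recorded but never satisfies `is_verified`. In this sketch, only a source that a person read and judged current moves the entry from lead to conclusion.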

The Bigger Picture 🌐

Tech-Savvy Lawyers Blend Tradition With Innovation

The DuckDuckGo story is not really about one search engine. It is about a profession — ours — navigating a moment when the most basic research infrastructure is being restructured around artificial intelligence, without a pause for professional reflection.

Lawyers are custodians of facts, advocates for truth, and officers of the court. The tools we use to find facts are not ethically neutral. They never were. But the gap between "good enough for a general user" and "professionally adequate for a licensed attorney" has never been wider.

The next time you open a browser tab to research something for a client, I want you to pause — just for a moment — and ask yourself: Do I know what this search engine is doing with my query right now? 🤔

If the answer is "I'm not sure," that pause just became an ethical obligation.

MTC.